Welcome to our weekly news post, a combination of thematic insights from the founders at ExoBrain, and a broader news roundup from our AI platform Exo…

JOEL

This week we look at:

- Anthropic’s $1.5B PE joint venture and ten new financial-services agents

- Goodfire’s research showing AI thought has a curved geometric structure

- Goldman Sachs’ projection of 24x token consumption growth by 2030

Claude is coming for financial services

Jamie Dimon (CEO of JPMorgan) opened the week by admitting he’d spent part of his Sunday evening building a live dashboard of asset swaps, bid-ask spreads and bank liquidity in Claude Code, by himself, in about twenty minutes. Anthropic ran two events this week. “The Briefing: Financial Services” filled a venue in Manhattan with Dario Amodei, Dimon, and customer segments from Goldman Sachs, JPMorgan, AIG and BNY. On 6 May, Code w/ Claude, their developer conference, unfolded in San Francisco with Managed Agents, Routines, an overnight self-improvement feature they call Dreaming, and a disclosure that API traffic is up 17x year on year.

Two announcements anchored the push into financial services. The first was a joint venture with Blackstone, Hellman & Friedman and others, committing roughly $1.5 billion to deploy Claude across PE-backed portfolio companies, with forward-deployed Anthropic engineers embedded alongside sponsor operating teams. CEO Dario Amodei claimed that Anthropic’s go-to-market organisation is “half a thousand going on a thousand” people, while peer software companies of similar revenue have sales teams of 50,000. The only way to close that gap is to borrow distribution, and PE sponsors sit on hundreds of mid-market portfolio companies desperate for margin.

The second announcement was the release of a financial services reference solution: ten agents covering pitchbook assembly, credit memos, KYC screening, month-end close and similar workloads, packaged as Skills, MCP connectors and Agent SDK templates. It is, frankly, a light piece of engineering. Most of it is YAML, prompts and standard connector wiring to PitchBook, FactSet and SharePoint. As a product, it is underwhelming, but as a marketing effort it makes sense.

Anthropic’s FS customer adoption map, shown at the Briefing, catalogues more than fifty workflows already running across lending, risk and compliance, investing and research, insurance, client operations and engineering, under the headline “your teams aren’t waiting, here’s what they’re building”. BNY was named as operating digital “co-workers” that staff can effectively hire to handle DDQ responses, reconciliations and onboarding end-to-end.

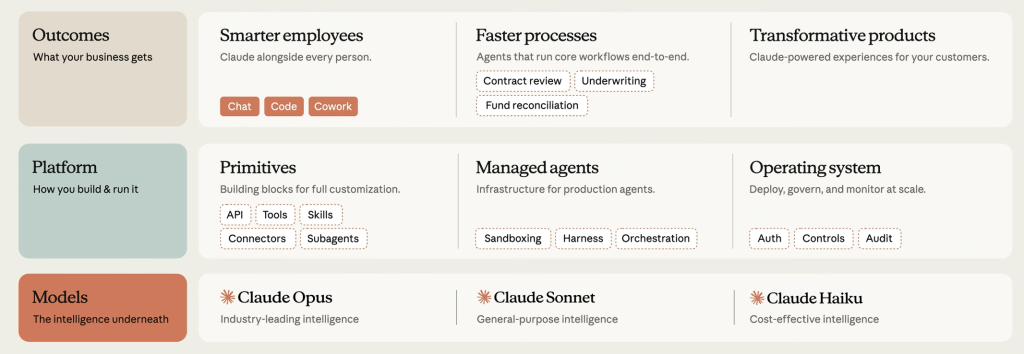

Anthropic is now presenting an enterprise architecture for agents: Outcomes at the top (Smarter employees, Faster processes, Transformative products), Platform in the middle (Primitives, Managed agents, Operating system, complete with Auth, Controls and Audit), and the Opus, Sonnet and Haiku models at the base. Many of the boxes are still aspirational. Sandboxing, harnesses, orchestration and audit are named but not yet built out to the standard a Tier 1 bank’s second line would accept.

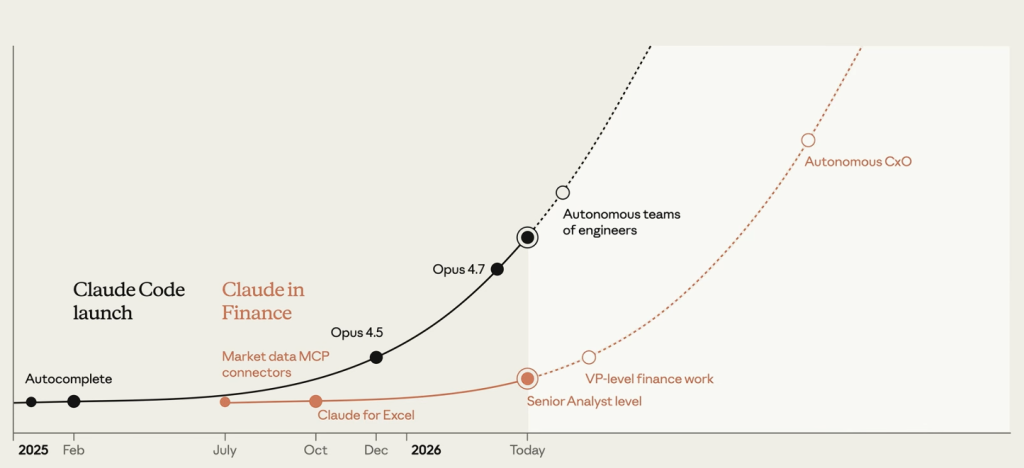

Anthropic also casually overlaid Claude Code’s trajectory, from autocomplete in early 2025 through to autonomous teams of engineers today, on a second curve for Claude in Finance. The two curves are offset by 10 to 12 months, and the implication is that this “curve of autonomy” is now starting to play out in finance, with today’s customer-adoption map looking much like Claude Code in late 2024.

But questions of job destruction and security were never far from the presentations. Dario spent part of the Briefing on Mythos. The next-generation model, he said, has already found around 300 vulnerabilities in Firefox in open testing and thousands more behind closed doors. He framed Chinese frontier labs as six to twelve months behind, which is the window in which we should be working to get our security in order.

Takeaways: Anthropic believes financial services are next, and very much hopes they are from a revenue perspective. If enterprises can learn to harness these models effectively, the autonomy wave that remade software in a year could wash through front and back office work just as quickly. But beyond the models themselves and the Claude Code harness, what was actually demonstrated this week is still extremely light. An enormous amount of build and adoption work remains, and it is not as if the incumbent enterprise software vendors are offering the infrastructure either. Anthropic is showing a destination; the road to it is still largely unpaved.

The geometry of AI thought

Mechanistic interpretability, or mech-interp, is the attempt to work out what is actually happening inside a neural network. Most AI engineers treat models as black boxes that take an input and return an output. Mech-interp opens the box. We’ve covered this topic multiple times, starting roughly two years ago with Anthropic’s Golden Gate Claude, the moment a single internal “feature” was amplified until the model could not stop talking about the bridge. That result showed that concepts can appear as identifiable features in a model’s internal representations, and that amplifying those features can change behaviour. The field has been trying to read those structures ever since.

Two new pieces of work push the conversation forward this week. Anthropic has built what it calls a natural language autoencoder. The method is roughly this: take an activation (mathematical “neurons” firing) from inside a running model, ask a second model to describe in English what that activation represents, then test the description by reconstructing the original activation from the text. If the round trip works, the explanation has captured the information. Applied to Claude, the method surfaces things the model does not say out loud.
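The round trip described above can be illustrated with a toy sketch. This is not Anthropic’s implementation: the concept names, the random "concept directions", and the `explain` and `reconstruct` stand-ins are all hypothetical, and a real system would use language models in both roles. The sketch only shows the shape of the test: describe an activation in text, rebuild it from the text alone, and score how much survived.

```python
import numpy as np

rng = np.random.default_rng(0)
DIM = 16
NAMES = ["bridge", "finance", "weather"]      # hypothetical concept labels
BASIS = rng.normal(size=(len(NAMES), DIM))    # hypothetical concept directions


def explain(activation: np.ndarray) -> str:
    """Stand-in explainer: describe an activation as weighted concept names."""
    weights, *_ = np.linalg.lstsq(BASIS.T, activation, rcond=None)
    return " ".join(
        f"{name}:{weight:.3f}"
        for name, weight in zip(NAMES, weights)
        if abs(weight) > 0.05  # drop concepts that barely contribute
    )


def reconstruct(description: str) -> np.ndarray:
    """Stand-in reconstructor: rebuild an activation from the text alone."""
    vec = np.zeros(DIM)
    for part in description.split():
        name, weight = part.split(":")
        vec += float(weight) * BASIS[NAMES.index(name)]
    return vec


def round_trip_score(activation: np.ndarray) -> float:
    """Cosine similarity between the original and the rebuilt activation."""
    rebuilt = reconstruct(explain(activation))
    denom = np.linalg.norm(activation) * np.linalg.norm(rebuilt)
    return 0.0 if denom == 0 else float(activation @ rebuilt / denom)


# An activation built from known concepts survives the round trip.
explainable = 2.0 * BASIS[0] + 0.5 * BASIS[1]

# An activation with no component along any concept does not.
noise = rng.normal(size=DIM)
fit, *_ = np.linalg.lstsq(BASIS.T, noise, rcond=None)
unexplainable = noise - BASIS.T @ fit

print(explain(explainable), round_trip_score(explainable))
print(repr(explain(unexplainable)), round_trip_score(unexplainable))
```

A high score means the description carried the information in the activation; a low score means the text missed whatever the activation was encoding.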

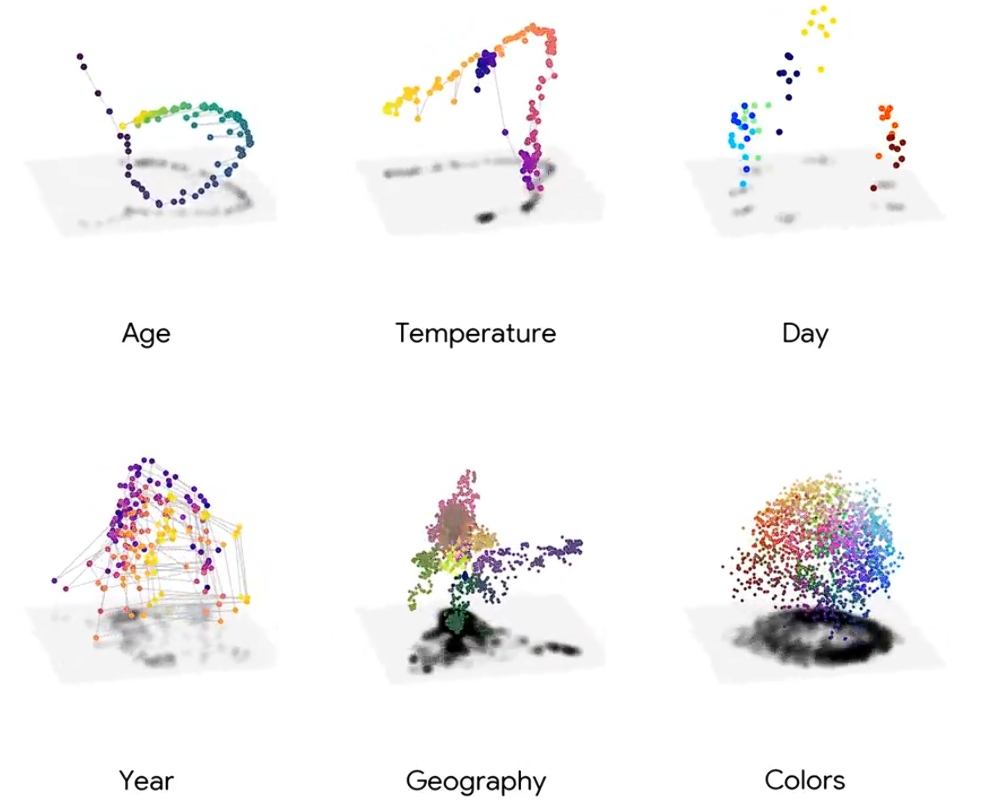

Goodfire is a San Francisco interpretability company founded in 2024, now valued at over a billion dollars. In new research they are tackling the interpretability question from a different angle. Activations inside transformer models are vectors living in spaces of thousands of dimensions. Goodfire has been exploring what shape those activations can take. Across controlled tasks, Goodfire finds that activations do not simply scatter randomly. They often sit on structured, curved geometries: months and weekdays form cycles; sequential concepts trace paths; graph tasks produce graph-like structures; physical simulations produce trajectories that respect the dynamics of the system. In separate biological work on Evo 2, a genomic foundation model trained on DNA from more than 100,000 species, Goodfire found that phylogenetic relationships are encoded geometrically in the model’s internal representations.

This level of interpretability is useful when we want to actively steer models. Steering is the act of nudging a model’s internal state at inference time to change its behaviour, without retraining. The industry standard typically treats internal model concepts as directions, straight lines you push along. Goodfire’s work shows that when concepts are curved, straight-line steering walks off the surface into regions where the model produces incoherence. Following the geometry gives steering that is more reliable and more precise. For safety, alignment, and commercial control of model behaviour, that is a significant practical advance.
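A toy sketch makes the difference concrete. It assumes, purely for illustration, that months sit on a unit circle in two dimensions; real activations are far higher-dimensional and the function names here are hypothetical, not Goodfire’s code. Steering from January halfway towards July along a straight line lands near the centre of the circle, a point that corresponds to no month at all, while steering along the circle lands on April.

```python
import numpy as np


def month_point(month_index: float) -> np.ndarray:
    """Place a month (0 = January) on an assumed unit circle."""
    angle = 2 * np.pi * month_index / 12
    return np.array([np.cos(angle), np.sin(angle)])


def steer_linear(start: np.ndarray, target: np.ndarray, strength: float) -> np.ndarray:
    """Straight-line steering: move along the chord from start towards target."""
    return start + strength * (target - start)


def steer_along_circle(start_month: float, target_month: float, strength: float) -> np.ndarray:
    """Geometry-aware steering: interpolate the angle so the point stays on the circle."""
    return month_point(start_month + strength * (target_month - start_month))


january, july = month_point(0), month_point(6)

halfway_linear = steer_linear(january, july, 0.5)
halfway_curved = steer_along_circle(0, 6, 0.5)

# Distance from the origin: 1.0 means the point is still on the circle.
print("linear:", np.linalg.norm(halfway_linear))
print("curved:", np.linalg.norm(halfway_curved))
```

The linear result has a norm close to zero, so it has left the surface where valid months live; the curved result keeps a norm of one.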

The harder question is what the geometry means. Elan Barenholtz, the cognitive scientist, argues that the shapes are not something the model consults while thinking. They are properties of frozen weights, visible only because activations pass through them. His thesis goes further: the existence of these structures (and indeed the fact that language models can self-generate coherent text from them) suggests language itself has a property of predicting its own continuations. If he is right, these geometries are the structure of language and data, not the structure of thought. Goodfire has not claimed otherwise. What they have shown is that this structure, whatever its ultimate nature, is causally navigable.

If large models trained on reality must compress reality to predict it, the shapes inside them are the compression artefacts and geometries of the world itself. Goodfire’s pilot on Alzheimer’s detection found a new class of biomarkers hiding in the geometry of a blood-test model. Their work with the Arc Institute recovered phylogenetic structure from a genomic model. The geometry was a path to new scientific knowledge, and this seemingly esoteric line of research may be opening up a new way to do science.

Takeaways: Most AI value today is extracted from automating coding and general knowledge work: the first-order win of a technology that can imitate human labour at scale. Reading the geometry inside models offers something stranger and potentially more profound. If these shapes encode structure that the world imposed on the training data, then interpretability becomes a scientific instrument for surfacing new regularities. That is a move from AI as a faster version of what we already do, to a microscope for a hitherto invisible world.

EXO

Goldman Sachs analyses the AI build-out

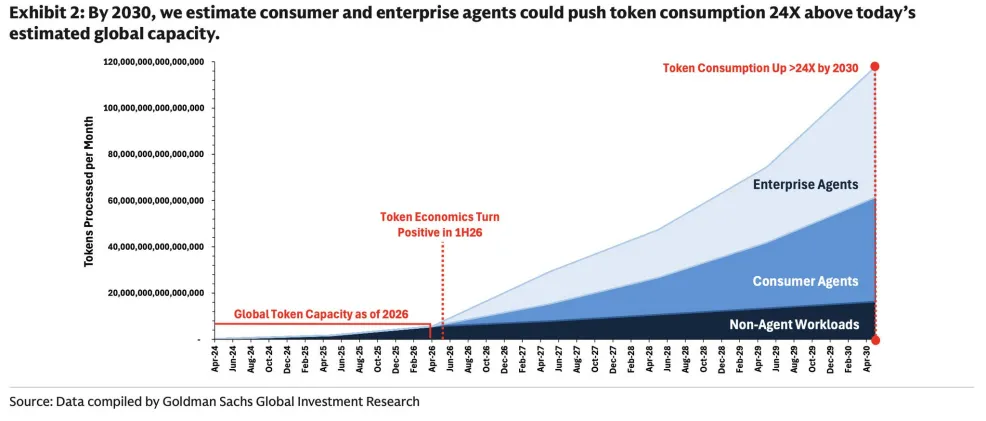

Our chart this week is from Goldman Sachs’ recent research on the AI infrastructure build-out. It shows their baseline projection for global token consumption rising 24x above today’s capacity by 2030, driven largely by enterprise and consumer agents coming online from mid-2026 onwards.

This 24x number, based on current industry projections, looks remarkably low from where we sit. Over the last six months, the agentic tasks that are economically viable have grown from tens or hundreds of thousands of tokens per run to hundreds of millions. ExoBrain consultants are each burning around a billion tokens per month, and if that usage were scaled up to the world’s knowledge workers, demand would be orders of magnitude higher than Goldman’s model assumes.
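As a rough back-of-envelope check, the arithmetic can be sketched as follows. Two inputs are assumptions rather than figures from the Goldman report: a 2026 baseline year (giving four years to 2030) and a hypothetical one billion knowledge workers worldwide. Change either and the outputs move accordingly.

```python
def implied_annual_growth(total_multiple: float, years: float) -> float:
    """Constant yearly growth factor that compounds to total_multiple."""
    return total_multiple ** (1 / years)


def monthly_tokens_at_scale(tokens_per_worker: float, workers: float) -> float:
    """Total monthly tokens if every worker used tokens_per_worker."""
    return tokens_per_worker * workers


BASELINE_YEAR = 2026      # assumption: "today" in the projection
ASSUMED_WORKERS = 1e9     # assumption: hypothetical global knowledge-worker count
TOKENS_PER_WORKER = 1e9   # from the text: about a billion tokens each per month

growth = implied_annual_growth(24, 2030 - BASELINE_YEAR)
demand = monthly_tokens_at_scale(TOKENS_PER_WORKER, ASSUMED_WORKERS)

print(f"24x by 2030 implies about {growth:.2f}x per year")
print(f"Scaled-up usage: {demand:.1e} tokens per month")
```

Under these assumptions, 24x works out to a little over a doubling each year, which is modest next to a per-task jump from hundreds of thousands of tokens to hundreds of millions.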

Nonetheless, Goldman’s report is worth reading. It rightly identifies the real battleground as the physical and institutional constraints on the build-out: chip obsolescence cycles, data centre design churn, power queues, permitting, specialised labour and component supply shocks. If these constraints are overcome, the demand will be there.

Weekly news roundup

AI business news

- Cloudflare says AI made 1,100 jobs obsolete, even as revenue hit a record high (Cloudflare’s admission that AI eliminated 1,100 roles while simultaneously hitting record revenue is the clearest real-world data point yet on how AI restructuring and growth can coexist on the same balance sheet.)

- OpenAI launches new voice intelligence features in its API (OpenAI’s new voice intelligence API features signal a direct push to make real-time audio a developer-layer commodity, expanding the competitive surface well beyond text-based AI.)

- Investor letter: TCI cuts Microsoft stake citing AI risks (A major institutional investor slashing its Microsoft position from 10% to 1% over AI-specific risks to Office and Azure is a rare, concrete signal that smart money is beginning to price in AI disruption as a liability, not just an asset.)

- Datadog’s stock jumps amid AI boom (Datadog’s 31% stock surge on earnings shows that AI infrastructure monitoring, not just AI model-building, is emerging as one of the most durable business models in the current wave.)

- Spotify wants to become the home for AI-generated personal audio (Spotify’s move to position itself as the platform for AI-generated personal audio represents a strategic bet that distribution and personalization infrastructure matter more than who creates the content.)

AI governance news

- Trump jumps from ‘anything goes’ to ‘strict regulation’ AI policy (The administration is reportedly studying executive orders for FDA-style pre-deployment safety reviews of frontier models, with Anthropic’s Mythos capabilities cited as a trigger for the rapid 180-degree pivot.)

- EU clinches deal to roll back AI restrictions (The EU’s formal deal to roll back AI Act restrictions is the most consequential regulatory reversal since the Act passed, and compliance timelines professionals have been building toward may now need to be rebuilt from scratch.)

- Five Eyes spook shops warn rapid rollouts of agentic AI are too risky (A joint advisory from CISA and the Five Eyes intelligence alliance warning against rapid agentic AI rollouts carries rare cross-border regulatory weight, effectively putting enterprise AI deployment teams on notice.)

- Pennsylvania sues Character.AI, claiming its chatbots illegally hold out as licensed professionals (Pennsylvania’s lawsuit against Character.AI, the first governor-led action alleging an AI chatbot illegally practiced medicine, establishes a legal theory that any consumer-facing AI with health or advisory capabilities now has to take seriously.)

- AI Legislative Update: May 8, 2026 (Connecticut’s comprehensive AI bill reaching the governor’s desk and Iowa signing a chatbot safety law in the same week marks the moment US state-level AI regulation shifts from proposals to enforceable law.)

AI research news

- Design Conductor 2.0: An agent builds a TurboQuant inference accelerator in 80 hours (An autonomous multi-agent system designed a working LLM inference accelerator chip in 80 hours from a paper alone, a concrete milestone showing AI closing the gap on end-to-end hardware engineering.)

- VibeServe: Can AI Agents Build Bespoke LLM Serving Systems? (VibeServe challenges the assumption that one-size-fits-all serving infrastructure is optimal, showing agents can auto-synthesize bespoke LLM serving stacks, a direct threat to years of hand-tuned engineering investment.)

- AI Co-Mathematician: Accelerating Mathematicians with Agentic AI (The AI Co-Mathematician paper makes a specific empirical case for where agentic AI accelerates real mathematical research workflows, giving practitioners a concrete model for domain-expert plus AI collaboration.)

- Hallucinations Undermine Trust; Metacognition is a Way Forward (This ICML 2026 position paper reframes the hallucination problem as a metacognition failure, knowing what you don’t know, which has immediate implications for anyone building reliable AI pipelines.)

- RemoteZero: Geospatial Reasoning with Zero Human Annotations (RemoteZero achieves geospatial reasoning from satellite imagery with zero human annotations, opening a path to AI-powered earth observation at scale without the labeling bottleneck that has stalled the field.)

AI hardware news

- Claude hitches a ride on SpaceX’s datacenter capacity (Anthropic taking the full 220,000-GPU Colossus 1 facility plus 300+ MW of new inference capacity addresses the rate-limit crunch driving recent Claude tier cuts, with multi-gigawatt orbital compute also under discussion.)

- AMD puts out new slottable GPU for AI-curious enterprises (AMD’s new MI350P (144GB HBM3e in a dual-slot PCIe card) signals a direct play for enterprises that want AI acceleration without ripping out existing server infrastructure, creating a real alternative to Nvidia’s locked-in ecosystem.)

- Nvidia to invest up to $2.1bn in neocloud Iren, funding deployment of up to 5GW of Nvidia DSX-aligned compute (Nvidia taking a $2.1B equity stake in a neocloud operator marks a structural shift from chipmaker to vertically integrated infrastructure investor, with 5GW of committed capacity that will shape who controls AI compute supply for years.)

- US suspects Nvidia chips smuggled to Alibaba via Thailand (If confirmed, the alleged smuggling of export-controlled Nvidia servers to Alibaba via a Thai AI initiative would represent the most significant enforcement failure of U.S. chip controls to date, with direct implications for the entire export regime.)

- OpenAI and Broadcom in discussions over financing of $18bn custom chip project – report (An $18B custom chip project backed by Broadcom, with Microsoft purchase commitments as collateral, signals that OpenAI is now seriously engineering its way out of Nvidia dependency, a move that would reshape the GPU market’s demand curve.)