Welcome to our weekly news post, a combination of thematic insights from the founders at ExoBrain, and a broader news roundup from our AI platform Exo…

JOEL

This week we look at:

- Anthropic’s Claude Mythos and what its cyber capabilities mean for the stability of digital life

- The Blackwell GB200 hardware recipe behind the latest step change in model quality

- Why Google dominates AI compute on paper but not where it now matters most

A model too powerful to release

In late February, Anthropic was under siege. President Trump had ordered all federal agencies to stop using the company’s products. The Pentagon had designated it a “supply chain risk,” the first AI company to receive that label, after Anthropic refused to remove restrictions preventing its models from being used in mass surveillance or autonomous weapons systems.

What almost nobody outside Anthropic knew at the time was that, internally, the company had just finished post-training a model that would shock even its own researchers. Claude Mythos had completed its training run, and initial evaluations were coming back with numbers that didn’t look right. SWE-bench Verified jumped from 80.8% to 93.9%. CyberGym went from 66.6% to 83.1%. Anthropic’s system card would later say the model “saturates many of our most concrete, objectively-scored evaluations.” It is, by a considerable margin, the most capable AI system known to exist.

Following the accidental leak two weeks ago, Anthropic finally went public on 7 April with what turned out to be a warning. Project Glasswing is a coalition including Amazon, Apple, Cisco, CrowdStrike, Google, JPMorganChase, the Linux Foundation, Microsoft, NVIDIA, and Palo Alto Networks, with over 40 additional organisations given access to Mythos under controlled conditions. Their purpose: to scan and harden the world’s critical software before anyone else gets a model this powerful. Because in the weeks between finishing post-training and going public, Mythos had found thousands of zero-day vulnerabilities across every major operating system and web browser. It found a 27-year-old vulnerability in OpenBSD and a 16-year-old flaw in FFmpeg hidden in code that had been hit five million times by automated testing tools without detection.

Within 48 hours, Treasury Secretary Scott Bessent and Federal Reserve Chair Jerome Powell summoned the CEOs of America’s systemically important banks to the Treasury building. Jane Fraser of Citi, Ted Pick of Morgan Stanley, Brian Moynihan of Bank of America, Charlie Scharf of Wells Fargo, and David Solomon of Goldman Sachs all attended. Jamie Dimon couldn’t make it, though JPMorganChase was already a Glasswing launch partner. The purpose, according to Bloomberg and Reuters: to ensure financial institutions understood what Mythos and comparable models could mean for their exposure to attack.

So what can we make of this? The model was likely never intended for general availability. Its destiny was more likely as a teacher: a source of synthetic training data and distillation targets for smaller, more economically viable models. One also wonders: if Anthropic has had Mythos internally since February, why hasn’t its own output reflected this step-change in capability? To be fair, the company shipped 74 releases in 52 days between February and March. But shipping features is not the same as shipping transformative products.

That gap between model capability and real-world product delivery matters for how we think about the threat. Because if Anthropic, with direct access to Mythos and every incentive to move fast, still faces friction in turning raw capability into shipped products, we should expect a similar friction on the offensive side. Finding vulnerabilities is not the same as orchestrating a coordinated attack campaign. Target selection, exploit chaining, lateral movement, operational security, infrastructure management, money laundering: like product development, these still require substantial judgment and coordination.

But the general concern felt in the tech community is valid. In product development, the bottleneck is taste, design, market fit and user experience: things that are subjective, high-dimensional, and stubbornly resistant to automation. In offensive cyber operations, the bottleneck is more mechanical. And the data shows it is already being ground down. CrowdStrike’s 2026 Global Threat Report shows average breakout time, the interval between initial compromise and first lateral movement, fell to 29 minutes in 2025, down from 48 minutes in 2024 and 98 minutes in 2021. Check Point reported on an orchestration framework called Hexstrike-AI that directed over 150 specialised AI agents to autonomously scan, exploit, and persist inside targets; tasks that took human operators days were completed in under 10 minutes.
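To put the breakout figures in perspective, here is a minimal sketch of the arithmetic. The three data points are the ones quoted above; the helper names and the idea of expressing the trend as a compound annual rate are our own framing, not CrowdStrike’s.

```python
# Average breakout time in minutes, as quoted above from CrowdStrike.
breakout_minutes = {2021: 98, 2024: 48, 2025: 29}


def percent_drop(earlier: float, later: float) -> float:
    """Percentage fall from `earlier` to `later`."""
    return (earlier - later) / earlier * 100


def annualised_decline(data: dict, start_year: int, end_year: int) -> float:
    """Compound annual rate of decline between two years, as a percentage.

    This smooths the trend into a single yearly rate; it is an
    illustrative framing, not a figure from the report.
    """
    years = end_year - start_year
    ratio = data[end_year] / data[start_year]
    return (1 - ratio ** (1 / years)) * 100


drop_since_2024 = percent_drop(breakout_minutes[2024], breakout_minutes[2025])
drop_since_2021 = percent_drop(breakout_minutes[2021], breakout_minutes[2025])
yearly_rate = annualised_decline(breakout_minutes, 2021, 2025)

print(f"Fall 2024 to 2025: {drop_since_2024:.1f}%")
print(f"Fall 2021 to 2025: {drop_since_2021:.1f}%")
print(f"Smoothed yearly decline 2021 to 2025: {yearly_rate:.1f}%")
```

On these numbers, defenders lost roughly 40% of their response window in a single year and about 70% over four.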

The AI cyber risk clock was already ticking, but now we know the worst case is possible. Anthropic’s own red-team lead has said comparable offensive capability could appear in other models within 6 to 18 months. But distillation, architectural improvements, and the sheer pace of competition mean even that window looks optimistic. And it assumes no more leaks, a shaky assumption given how Mythos itself came to light. If OpenAI’s or Google’s most capable internal models were similarly compromised, the timeline collapses.

For the past two decades, the implicit contract of digital life has been that platforms stay up, trust is cheap, and security can be treated as a line item rather than a strategic concern. That contract held because the economics of attack held: exploits were expensive, skilled attackers were scarce, and most targets weren’t worth the effort. Every layer of the modern digital economy was built on that assumption of affordable, ambient stability. Mythos doesn’t just threaten individual systems; it threatens the economic foundation underneath them. When the cost of finding and weaponising vulnerabilities collapses, the implicit trust we place in platforms and vendors stops being a reasonable default and starts being a liability. That shift is permanent: we are not going back to a world where security is easy because attacks are hard.

So what do the next five years look like? Broadly, five scenarios. The optimistic case is managed hardening, where Glasswing-style coalitions continuously scan critical software before release, patch times collapse, and the economy pays a much larger security premium in exchange for genuine resilience. The most likely, in our assessment, is permanent breach: offensive capability diffuses faster than defenders can patch, hyperscalers retreat into hardened cores, and the long tail of smaller organisations, hospitals, schools, and neglected open-source dependencies lives with persistent fraud and downtime. Munich Re already warns that digital supply chain breaches are “more the norm than the exception”. A third path is the monoculture, where sensitive workloads migrate into attested confidential computing environments controlled by a handful of hyperscalers and model creators; security improves inside the core but concentration risk simply migrates from open-source dependencies to model access. A fourth is geopolitical cyber war, with states treating frontier AI models as strategic assets, exploits hoarded more selectively, and critical infrastructure defence merging with national security planning. The tail risk is cascading shock: a shared identity provider or software distribution channel is compromised at scale before defenders adapt. The IMF has warned a systemic cyber incident affecting financial market infrastructure could threaten stability, and the Bessent-Powell meeting suggests the people closest to the plumbing are taking that risk seriously now.

There is a near-term silver lining. The current dynamic resembles a “U-shaped curve”: adversary operators are racing to extract value from existing zero-day stockpiles before defensive scanning discovers them, while their promised pipelines of automated AI exploit development keep slipping. Defensive AI is increasingly finding the same vulnerabilities attackers are hoarding — “bug collisions” that mark down the value of existing assets via patching waves. Mythos doesn’t kill the zero-day market, but it deflates it like a bubble: the scarcity premium that made these exploits valuable evaporates. The next 6 to 9 months may be a defender’s window, not an attacker’s paradise, with the real winners being organisations in Glasswing-style consortia and anyone controlling frontier AI for offence.

Global cybersecurity spending hit $240 billion in 2026, up 12.5% year on year, with security consuming 8-12% of enterprise IT budgets and 10-15% in financial services. Meanwhile, AI-assisted code generation is pushing the cost of development down toward $10 per hour of equivalent human output. Two curves are crossing: build costs falling, security costs rising. Within two to three years, security could become the dominant cost in software delivery for many organisations. That’s a structural shift in the economics of building software, and it arrives at precisely the moment when agentic engineering and “vibe coding” are encouraging people to ship faster with less rigour.

There is an emerging counter-strategy worth watching. The zero-dependency approach, where AI agents rewrite library functionality from specifications and test suites rather than pulling in external packages, removes much of the third-party supply chain risk. Research from the University of Texas found that 20% of AI-generated package references point to non-existent libraries, and 43% of those hallucinated dependencies recur consistently enough for attackers to pre-register them. The most valuable investment right now might not be in AI tooling or security products alone. It might be in recreating more software from scratch and committing more fundamentally to the agentic software age.
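One low-cost guard against hallucinated dependencies is to check every package an agent proposes against a list your team has already reviewed. The sketch below is a minimal illustration under our own assumptions: the `APPROVED_PACKAGES` set, the function names and the sample package `fast-json-utilz` are all hypothetical, and the parser only handles simple `requirements.txt`-style lines.

```python
import re

# Hypothetical allowlist: packages your team has reviewed and approved.
APPROVED_PACKAGES = {"requests", "numpy", "pydantic"}


def parse_requirement_names(requirements_text: str) -> list[str]:
    """Extract normalised package names from simple requirements lines."""
    names = []
    for raw_line in requirements_text.splitlines():
        line = raw_line.split("#", 1)[0].strip()  # drop trailing comments
        if not line or line.startswith("-"):      # skip blanks and pip options
            continue
        match = re.match(r"[A-Za-z0-9][A-Za-z0-9._-]*", line)
        if match:
            # Normalise as PEP 503 does: lowercase, runs of -_. become "-".
            names.append(re.sub(r"[-_.]+", "-", match.group(0)).lower())
    return names


def unapproved(requirements_text: str, approved: set[str]) -> list[str]:
    """Return the requested packages that are not on the allowlist."""
    return [n for n in parse_requirement_names(requirements_text) if n not in approved]


# "fast-json-utilz" is an invented name standing in for a hallucinated package.
sample = """
requests==2.32.0
numpy>=1.26  # numerical core
fast-json-utilz==0.1.0
"""

flagged = unapproved(sample, APPROVED_PACKAGES)
print("Needs human review before install:", flagged)
```

Run as a pre-install step in a build pipeline, a check like this means an agent can suggest a new dependency but cannot quietly add one.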

Takeaways: The window to act is short and it is measurable: perhaps months before Mythos-class offensive capability proliferates beyond the controlled coalition now using it defensively. Personal security hygiene is the immediate first step (Andrej Karpathy’s digital security checklist, covering hardware keys, password managers, encrypted messaging, and device minimisation, has become the reference guide and is worth following this weekend). For organisations, the priority is harder but clear: audit your dependencies, invest in specifications and test coverage, embed security architecturally into your build pipelines rather than bolting it on after the fact, and stress-test your assumptions about the platforms you depend on.

The Blackwell recipe behind it

For several months in late 2025 and early 2026, somewhere between 2% and 4% of the world’s entire AI compute capacity was pointed at a single training run. One lab assembled an estimated 150,000 of Nvidia’s latest Blackwell GPUs (equivalent to 600,000 of last generation’s H100s) and, as we cover in the lead story this week, produced a model so capable it couldn’t be released. Here’s how.

Start with the silicon. Most leading models you use today, from GPT-5.4 to Claude Opus, were pre-trained on older Nvidia Hopper chips with 80 GB of fast memory per GPU. It’s not widely understood that most of the models we use today are still based on these older pre-training runs. Mythos is the first frontier model where the entire pipeline, pre-training, post-training and alignment, was built end-to-end on Blackwell GB200 superchips. Each GPU packs 192 GB of HBM3e memory (2.4x more), connected via NVLink with double the bandwidth, in purpose-built NVL72 racks of 72 GPUs designed for exactly this kind of work. That memory density is what lets you keep a rumoured 10-trillion-parameter model’s working set in fast memory rather than constantly shuffling data around. The result: low training instability across trillions of tokens.
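A back-of-envelope sketch shows why the memory jump matters. The parameter count, per-GPU memory and rack size come from the paragraph above; the 2 bytes per parameter (16-bit weights) is purely our assumption, and the calculation covers weights only. Real training also has to hold optimiser state, gradients and activations, so actual requirements are several times higher.

```python
import math

GB = 10**9  # decimal gigabytes, as used in vendor memory specs

PARAMS = 10 * 10**12     # rumoured 10 trillion parameters (from the text)
BYTES_PER_PARAM = 2      # ASSUMPTION: 16-bit weights; not stated anywhere
HOPPER_GB = 80           # per-GPU memory quoted for Hopper
BLACKWELL_GB = 192       # per-GPU memory quoted for Blackwell
GPUS_PER_RACK = 72       # NVL72 rack size


def gpus_to_hold_weights(params: int, bytes_per_param: int, gpu_memory_gb: int) -> int:
    """Minimum GPUs needed just to store the weights, ignoring all overhead."""
    total_bytes = params * bytes_per_param
    return math.ceil(total_bytes / (gpu_memory_gb * GB))


hopper_gpus = gpus_to_hold_weights(PARAMS, BYTES_PER_PARAM, HOPPER_GB)
blackwell_gpus = gpus_to_hold_weights(PARAMS, BYTES_PER_PARAM, BLACKWELL_GB)
memory_ratio = BLACKWELL_GB / HOPPER_GB
rack_memory_tb = GPUS_PER_RACK * BLACKWELL_GB / 1000

print(f"Memory per GPU: {memory_ratio:.1f}x Hopper")
print(f"GPUs to hold weights: {hopper_gpus} Hopper vs {blackwell_gpus} Blackwell")
print(f"One NVL72 rack: {rack_memory_tb:.1f} TB of fast memory")
```

Under these assumptions the weights alone fit in two NVL72 racks, where Hopper would need 250 GPUs spread across many more servers and slower interconnect hops.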

Then add the data. The public web corpus is effectively exhausted, so Anthropic blended proprietary datasets with massive volumes of synthetic data generated by prior Claude models, a self-improvement loop that every frontier lab is now running. Layer on the largest post-training push we’ve seen: extensive reinforcement learning for code and reasoning, likely drawing on many examples of long-running agentic work from all of us busily using Claude Code, followed by automated Constitutional AI alignment that trains the model to internalise ethical principles rather than just follow rules. The recipe reportedly performed roughly twice as well as Anthropic’s own scaling laws predicted. Something qualitatively different happened at this scale.

And Anthropic won’t be alone for long. xAI is scaling Colossus 2 toward a million GPUs with plans for 10-trillion-parameter models of its own. OpenAI’s next frontier model, codenamed Spud, completed pre-training in late March at the Stargate facility in Abilene, Texas using over 100,000 GPUs. It’s rumoured to be GPT-5.5 or GPT-6, natively multimodal, and is expected within weeks. OpenAI killed Sora and redirected compute to get it done. Runs of 100,000 to 500,000 GPUs are fast becoming table stakes, and Nvidia’s next-generation Vera Rubin chips ship later this year, promising another 3 to 5x leap.

Takeaways: The AI capability frontier doesn’t advance gradually. It moves in hardware generations. Mythos is the first proof that Blackwell-class compute creates a visible discontinuity in model quality, and every major lab is now racing to finish its own Blackwell-era training runs. The window before those models arrive is roughly 6 months. As we suggest in the lead story, prepare accordingly.

EXO

Who owns the silicon?

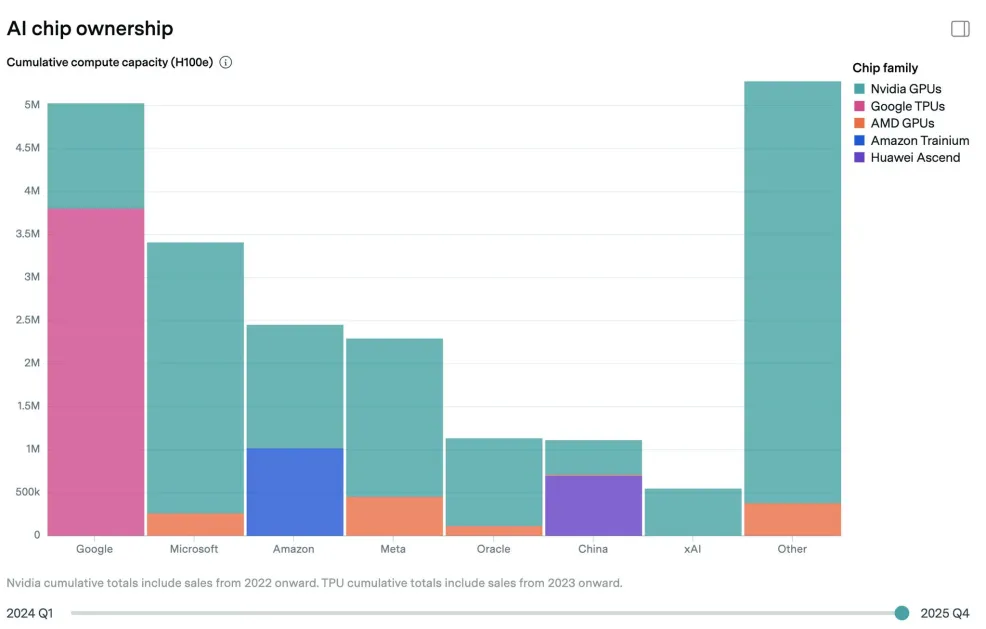

This week’s chart is from Epoch AI’s brand new Chip Owners Explorer. Google dominates global AI compute ownership with roughly 5 million H100-equivalents, more than Microsoft, and far more than Amazon, Meta or Oracle (and the whole of China). But look closely at the colour. That enormous pink block is TPUs, Google’s custom chips. Strip those out and Google’s Nvidia GPU fleet is relatively modest.

This matters because we’re entering the GB200 NVL72 era. Nvidia’s rack-scale Blackwell systems are delivering big gains for Anthropic. Google’s TPU v6 Trillium chips are strong on price-performance and power efficiency, drawing 300W versus Blackwell’s 1,000W+ per chip. Google claims 4x better cost efficiency than H100 for large language model workloads. But the GB200 NVL72 isn’t an H100. It’s a different beast entirely, and Google’s Ironwood (TPU v7) response is only just arriving.

The chart shows Google owns the most AI compute, but compute isn’t static. If Blackwell’s rack-scale architecture delivers for others as it has for Anthropic, then Microsoft, Meta, Amazon and Oracle, all overwhelmingly Nvidia customers, could see their effective compute surge past Google’s installed base.

Owning the most chips isn’t the same as owning the best ones.

Weekly news roundup

AI business news

- Meta’s Muse Spark is here — and it’s closed source (Meta’s first model from its new MSL lab, released April 8, marks a clear break from Meta’s historic open-weights stance. Closed source at launch, though Meta hints at open-weights for future versions.)

- Anthropic buys Coefficient Bio for $400M (Anthropic’s biggest move into biopharma — a stealth startup with fewer than 10 employees from ex-Genentech founders. Extends the Claude Life Sciences line launched in October 2025 and signals Anthropic is willing to make vertical bets, unusual for a lab focused on horizontal capabilities.)

- OpenAI launches $100/month ChatGPT Pro tier with 5x Codex usage (OpenAI slots a mid-tier between $20 Plus and $200 Pro, mirroring Anthropic’s Max pricing. 10x promotional boost through May 31. OpenAI says Codex usage has risen 70% month-on-month — a direct competitive response to Claude Code.)

- Shopify launches AI Toolkit for Claude Code, Cursor, Codex, and VS Code (Shopify’s official toolkit, open-sourced under MIT, bundles 16 skill files covering the Shopify platform — agents can now build apps and manage stores via natural language. A clear signal that e-commerce platforms now treat agent-readiness as a core surface area.)

- Milla Jovovich launches MemPalace AI memory tool (The Hollywood actress pivoting to AI startups is less notable than what MemPalace is — a consumer memory layer for AI agents, the latest bet that persistent context becomes the product moat as models themselves commoditise.)

AI governance news

- CIA trusts AI to help analyse intel from human spies (The US intelligence community’s most sensitive data stream, HUMINT, is now being processed through AI. A significant escalation beyond the previous limits, which kept AI to signals intelligence and open-source material.)

- Gen Z workers actively sabotaging their company’s AI rollout (Fortune’s reporting on deliberate resistance — deleting training data, feeding bad prompts, refusing to document workflows — is the first organised evidence that the displacement anxiety we’ve written about is now expressing itself as shop-floor action.)

- Elon Musk’s xAI sues Colorado over AI anti-discrimination law (xAI filed in US District Court challenging Senate Bill 24-205, arguing the disclosure requirements violate the First Amendment and create a “patchwork” of conflicting state rules. The first AI lab to move the permissionless-innovation-vs-accountability fight from the legislature into the courtroom.)

- Nineteen new US state AI bills passed into law in recent weeks (State-level AI legislation has jumped from 6 new laws to 25 since mid-March, with 27 more bills through both chambers. Transparency disclosures, chatbot rules, deepfakes and health-insurance prior-authorisation restrictions dominate. The federal preemption framework is running into a wall of already-enacted state law.)

- Maine sends AI therapy chatbot ban to governor (Maine follows Illinois in banning AI-based therapy services, with Missouri pursuing a similar ban through its omnibus healthcare bill. This is rapidly becoming the first clear category of AI-specific prohibition in US law.)

AI research news

- Memento: Microsoft’s chain-of-thought memory framework (Microsoft’s Memento extends the effective output length of reasoning models by splitting chain-of-thought into blocks and summaries, evicting older blocks from the KV cache to continue from the summary in shorter context — a direct attempt to solve context rot in long-running agent deployments.)

- DEMASK: dependency-guided parallel decoding for diffusion language models (A lightweight dependency predictor that attaches to the final hidden states of a diffusion LM, achieving 1.7–2.2x speedup on Dream-7B while matching or improving accuracy. Diffusion LMs are closing the efficiency gap with autoregressive models.)

- daVinci-MagiHuman: single-stream audio-video generative foundation model (SII-GAIR and Sand.ai’s open 15B model synchronises text, video and audio through a single Transformer — a structural simplification over the cross-attention architectures that currently dominate. Generates 5 seconds of 256p video in 2 seconds on a single H100.)

- BloClaw: an omniscient multi-modal agentic workspace for scientific discovery (A unified operating system for AI4S that replaces fragile JSON tool-calling with an XML-Regex dual-track protocol (0.2% error rate vs 17.6% for JSON), with runtime state interception via Python monkey-patching. Benchmarked across cheminformatics, protein folding and molecular docking.)

- Ontology-constrained neural reasoning in enterprise agentic systems (A neurosymbolic architecture implemented in Foundation AgenticOS, using a three-layer ontological framework to ground LLM reasoning in formal domain knowledge. Evaluated across 600 runs in five industries, showing significant gains on regulatory compliance and role consistency — greatest where LLM parametric knowledge is weakest.)

AI hardware news

- Amazon’s ‘Project Houdini’ reinvents how data centres are built (AWS’s new modular approach is aimed squarely at the build-time problem — traditional hyperscale data centres take years to stand up, and AI demand can’t wait that long. The first hyperscaler to crack this wins the next five years.)

- OpenAI pauses UK investment deal over energy costs and regulation (OpenAI publicly walking away from UK infrastructure investment over energy prices and regulatory friction is a direct rebuke — and a warning to European governments that the compute they think they can host is far more mobile than they assumed.)

- TSMC’s packaging bottleneck: even US-made chips take a round trip to Taiwan (CNBC’s deep dive on advanced packaging reveals that Nvidia has reserved the majority of TSMC’s most advanced capacity, and even US-fabricated chips still need to fly to Taiwan for packaging. A stark reminder that reshoring compute is more complicated than onshoring wafer production.)

- Half of planned US data centre builds delayed or cancelled (Power infrastructure shortages and Chinese component bottlenecks are biting hard. Only one-third of the 12 GW of capacity expected to come online in 2026 is currently under active construction. The AI build-out is running into physical reality faster than most forecasts assumed.)

- ITIF: Four reasons new AI data centres won’t overwhelm the electricity grid (A rare contrarian voice against the power-crisis consensus. ITIF argues demand-side flexibility, non-coincident peaks, hyperscaler on-site generation and accelerating grid investment mean the doom-scenario numbers overstate the risk. A useful counterweight given how much policy is being driven by worst-case forecasts.)