Welcome to our weekly news post, a combination of thematic insights from the founders at ExoBrain, and a broader news roundup from our AI platform Exo…

JOEL

This week we look at:

- What the Spud and Mythos leaks from OpenAI and Anthropic tell us about the next generation of frontier models

- Why Sanders and AOC’s data centre moratorium bill matters even though it will never pass

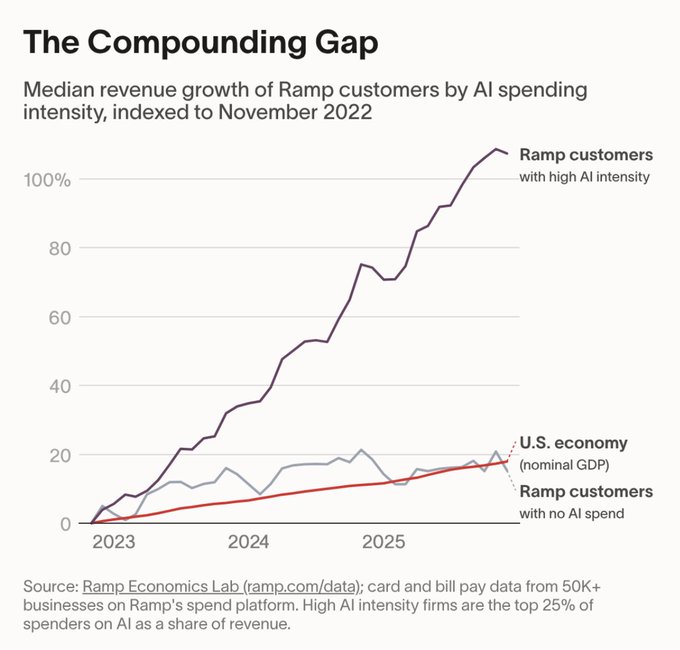

- A Ramp chart showing AI-heavy companies growing twice as fast — and what that really means

New models Spud and Mythos leaked

Two new words entered the AI lexicon this week: Spud and Mythos. They are the codenames for what appear to be the next frontier models from OpenAI and Anthropic respectively, and their emergence tells us a lot about where the AI race is heading, even if the details remain thin. Spud surfaced through a report in The Information, which revealed that OpenAI has completed pretraining on a model that Sam Altman described internally as “very strong” and capable of “really accelerating the economy.” Mythos, meanwhile, was never meant to surface at all. Fortune’s Bea Nolan discovered nearly 3,000 unpublished assets sitting in a publicly accessible cache on Anthropic’s own website, including draft blog posts describing a model called Claude Mythos that sits in a new tier, codenamed Capybara, above Opus. The documents describe the model as “far ahead of any other AI model in cyber capabilities” and warn of unprecedented security risks. Anthropic confirmed the model exists and blamed human error for the exposure.

The strongest signal that Spud is real came not from the leak itself but from the sacrifice that accompanied it. OpenAI killed Sora, its AI video tool, shocking partners including Disney and scrapping what was reportedly a billion-dollar content deal. Sora was costing around $500,000 a day in compute and its own lead admitted the economics were “completely unsustainable.” Fidji Simo’s internal message was blunt: “We cannot miss this moment because we are distracted by side quests.” You don’t blow up a Disney partnership for a side project. Whatever Spud is, OpenAI is betting the company’s near-term trajectory on it.

The natural question is: what will more intelligence actually mean? Altman’s language around Spud echoes OpenAI’s GDPval benchmark, which measures model performance on real-world professional tasks across 44 occupations. Previous results showed impressive speed and cost improvements on individual documents and deliverables. But high-value knowledge work was never really about producing the documents. It’s about deciding what to prioritise, collaborating across complex networks, understanding who needs what and why, and navigating the subtle barriers that sit between a good output and a successful outcome. GDPval doesn’t measure any of that.

This is the reality that anyone running multi-agent workflows today already knows. We have, in many ways, an abundance of intelligence. Claude Opus 4.6 and GPT 5.4 can handle remarkably ambiguous briefs and produce sensible strategies nine times out of ten, up from perhaps four out of ten with the previous generation. The bottleneck has moved. It now sits with the human operator, who is managing tens or hundreds of parallel agent threads, each of which periodically blocks because it needs a strategic decision, a piece of tacit context, or a judgement call that only the person who understands the full landscape can make. Tiago Forte’s recent work on the AI Second Brain captures this well: as agents do more work, they surface more decisions, and those decisions are harder because they’re the ones machines can’t yet make alone. You find yourself in a relentless stream of high-stakes choices, several per minute, and it is draining.

Both labs clearly see this. Anthropic’s Cowork and Claude Code, and OpenAI’s planned superapp combining ChatGPT, Codex and the Atlas browser, are attempts to solve the “harness” problem rather than the intelligence problem. A recent Anthropic engineering post showed how the jump from Opus 4.5 to 4.6 allowed them to strip out entire scaffolding layers because the model could sustain coherent, long-running work without them. More capable models need less orchestration, or at least shift the orchestration upward from granular task management to higher-level oversight. If Spud or Mythos represents another such jump, the combination of smarter models and smarter harnesses could push us closer to genuinely autonomous knowledge work. We may not yet know what we don’t know about what these models can perceive.

But there are two elephants in the room. The first is cost. Current subscription prices are heavily subsidised. A $200-a-month Claude Max account almost certainly consumes thousands of dollars of compute each month. With the Iran conflict pushing gas prices up, and with them the cost of powering data centres, this age of abundance is unlikely to last. The leaked Mythos materials suggest it won’t be widely available initially due to its complexity and cost. The second elephant is safety. If Mythos genuinely represents a step change in understanding code at depth, and therefore in finding vulnerabilities, Anthropic faces a direct tension with its own Responsible Scaling Policy, which commits to pausing development if safety measures can’t keep pace. That commitment becomes harder to honour with an IPO on the horizon.

Takeaways: At ExoBrain, we focus on what persists regardless of how smart the models get. More intelligence won’t solve the problem of getting the right data to the right agent at the right time; a model doesn’t know what it doesn’t know, however capable it becomes. Nor does it solve the challenge of supporting humans who must manage many parallel workstreams and make constant strategic decisions. Build for orchestration, context management, and human facilitation. Don’t solve problems that more compute will eventually handle. And as the era of cheap tokens likely draws to a close, encode more of your workflows in fast, deterministic code, reserving frontier model capabilities for the work that truly demands them.
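As one illustration of that last point, here is a minimal sketch of a deterministic-first workflow step: cheap rule-based code handles the cases it can, and only the remainder is escalated to a model. The task (labelling inbound messages), the rules, and the `call_frontier_model` stub are all hypothetical and chosen purely for illustration; no real API is implied.

```python
import re
from typing import Callable, Optional, Tuple

# Hypothetical rule: messages that mention an invoice number.
INVOICE_PATTERN = re.compile(r"\binvoice\s+#?(\d+)\b", re.IGNORECASE)


def classify_with_rules(message: str) -> Optional[str]:
    """Return a label if a cheap deterministic rule matches, else None."""
    if INVOICE_PATTERN.search(message):
        return "invoice"
    if "unsubscribe" in message.lower():
        return "newsletter"
    return None


def call_frontier_model(message: str) -> str:
    """Hypothetical stand-in for an expensive model call (no real API)."""
    return "needs_review"


def classify(
    message: str,
    fallback: Callable[[str], str] = call_frontier_model,
) -> Tuple[str, str]:
    """Try the deterministic rules first; escalate only when they fail.

    Returns (label, route), where route records which path handled it.
    """
    label = classify_with_rules(message)
    if label is not None:
        return label, "rules"
    return fallback(message), "model"


for text in [
    "Please pay invoice #1042 by Friday",
    "Click here to unsubscribe",
    "Can we rethink the Q3 roadmap?",
]:
    print(classify(text))
```

Recording the route alongside the label makes it easy to track what share of traffic still reaches the model, which is the number to watch if token prices rise.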

Democrats bet on data centre anger

Senator Bernie Sanders and Representative Alexandria Ocasio-Cortez introduced the AI Data Center Moratorium Act this week, a bill that would freeze all new data centre construction in the United States until Congress passes comprehensive AI safety legislation. The conditions for lifting the moratorium are extensive: government pre-approval of AI products, guarantees that data centres won’t raise electricity prices, union labour requirements, and protections against job displacement. It is, by any measure, an ambitious piece of legislation.

It is also almost certainly going nowhere. Republicans control both the House and the Senate, and the Trump administration is actively championing data centre expansion. Even if Democrats retake the House in the November midterms, as many forecasters predict, the bill would need to be reintroduced in the new Congress in January 2027. It would then face a Senate filibuster requiring 60 votes to overcome, and a near-certain presidential veto. Realistically, legislation of this kind cannot become law before 2029 at the earliest, and only then with a sympathetic president.

So why does it matter? Because the frustration it channels is real and growing. Over 230 community and environmental groups across 24 states have called for a national moratorium. In the states where data centres are actually being built, public opinion is sharply negative: 52% of Americans oppose construction near where people live, and 64% cite rising utility costs as their primary concern. Abigail Spanberger won the Virginia governorship last year with a central campaign message that data centres should “pay their fair share”. Democrats are paying attention.

The pattern here echoes what we have covered before in ExoBrain: AI’s impacts are global and largely invisible to most people, but where they land locally, they land hard. National polls show voters are broadly neutral, even mildly positive, about data centres. But in Northern Virginia, rural Georgia, and central Indiana, people are turning up to town hall meetings angry about noise, water use, and electricity bills. Politicians respond to that intensity, not to national averages. And with the Iran conflict pushing energy prices higher, every new data centre becomes harder to justify to local communities already feeling the squeeze.

Takeaways: The Sanders moratorium bill is political positioning, not pending legislation, but it reflects a genuine and bipartisan grassroots backlash against data centre expansion. For anyone who uses AI services, wherever they are in the world, the politics of American data centres is now directly relevant to the infrastructure you depend on.

EXO

Are some firms reaping an AI dividend?

This week’s chart comes from Ramp Economics Lab, which tracks real card and bill pay data from over 50,000 businesses. They split their customers by AI spending intensity (the top 25% of spenders on AI as a share of revenue versus those spending nothing) and indexed median revenue growth from November 2022. The result is striking. High-AI-intensity firms have roughly doubled their revenue. Those with no AI spend have grown by around 20%, barely keeping pace with US nominal GDP. The gap has been widening sharply since mid-2024.
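To make the method concrete, here is a minimal sketch of how a cohort index of this kind could be computed. Everything in it is an assumption for illustration: the firm records and figures are invented to echo the rough shape of the chart, and Ramp’s actual methodology may well differ, for example in how it treats firms that join or leave the sample.

```python
import math
from statistics import median

# Hypothetical firms: (AI spend as a share of revenue,
#                      revenue in the base month, revenue in the latest month).
FIRMS = [
    (0.000, 100.0, 118.0),
    (0.000, 80.0, 96.0),
    (0.000, 50.0, 61.0),
    (0.001, 70.0, 84.0),
    (0.002, 90.0, 117.0),
    (0.003, 30.0, 39.0),
    (0.004, 60.0, 84.0),
    (0.005, 45.0, 63.0),
    (0.006, 55.0, 88.0),
    (0.020, 40.0, 76.0),
    (0.030, 20.0, 42.0),
]


def revenue_index(base_rev, latest_rev):
    """Revenue indexed so that the base month equals 100."""
    return 100.0 * latest_rev / base_rev


def cohort_indices(firms, top_fraction=0.25):
    """Median index for the top AI spenders and for firms with no AI spend."""
    non_spenders = [f for f in firms if f[0] == 0]
    spenders = sorted(
        (f for f in firms if f[0] > 0), key=lambda f: f[0], reverse=True
    )
    # Keep the top fraction of spenders, ranked by AI share of revenue.
    top_n = max(1, math.ceil(len(spenders) * top_fraction))
    high_intensity = spenders[:top_n]
    high = median(revenue_index(b, l) for _, b, l in high_intensity)
    none = median(revenue_index(b, l) for _, b, l in non_spenders)
    return high, none


high, none = cohort_indices(FIRMS)
print(f"High AI intensity index: {high:.0f}")
print(f"No AI spend index: {none:.0f}")
print(f"Gap in index points: {high - none:.0f}")
```

Using the median rather than the mean keeps a handful of hypergrowth firms from dragging the cohort figure upward, though it does nothing to settle whether the spending caused the growth.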

The obvious question: does AI spending drive growth, or do fast-growing companies simply spend more on AI? A February 2026 study from the Bank for International Settlements offers a useful reference point. Using data from 12,000 European firms and an instrumental variable approach to isolate causation, researchers found AI adoption increased labour productivity by around 4%. That’s a real, measurable effect, but a long way from the gap in Ramp’s chart, where high-intensity firms grew by roughly 100% against around 20% for firms with no AI spend. The likely truth sits between the two. AI does appear to help, but the firms spending the most are probably already more adaptive, more tech-forward, and more growth-oriented.

Weekly news roundup

AI business news

- OpenAI upgrades Codex to automate your workflows – and compete better with Claude Code (OpenAI pushes plugins to challenge Claude Code and Cowork’s lead.)

- Researchers: AI isn’t killing jobs, it’s ‘unbundling’ them (Rather than wholesale job elimination, the pattern emerging is decomposition — AI absorbs specific tasks within roles while creating demand for new task bundles, reshaping jobs rather than replacing them.)

- Anthropic’s Economic Index: “Learning Curves” (The fifth economic report finds Claude used more intensely in high-income countries and by knowledge workers, with API workflows increasingly automating directive tasks like customer service billing — raising questions about whether AI amplifies existing economic advantages.)

- Google acquires ProducerAI, expands Lyria 3 music generation in Gemini (Google adds AI music creation to Gemini with 30-second tracks and SynthID watermarking — another step toward Gemini as the everything app, and another creative domain where AI tools are arriving faster than licensing frameworks.)

- US startup funding slows sharply in March (After record January-February megarounds including OpenAI’s $110B raise, March funding dropped to ~$13B — the decline concentrated at late-stage, while seed and early-stage dealmaking holds steady.)

AI governance news

- Judge blocks Pentagon’s effort to ‘punish’ Anthropic by labelling it a supply chain risk (A federal judge grants an injunction against the Pentagon’s supply-chain-risk designation of Anthropic, backed by an amicus brief from nearly 150 retired judges who called it a serious overreach of executive authority.)

- GitHub: We’re going to train on your data after all (From April 24, Copilot Free, Pro, and Pro+ user interaction data, including inputs, outputs, code snippets, file names, and repo structure, will train AI models by default. Business and Enterprise tiers are exempt. Users can opt out, but training is switched on unless they do.)

- LiteLLM hacked: malware steals user credentials via compromised dependency (The popular open-source AI proxy was hit by malicious code injected through a software dependency, stealing login credentials. Its SOC2 and ISO 27001 certifications, provided by startup Delve — itself facing allegations of misleading clients — are now under scrutiny. A sharp reminder that supply chain security in the AI toolchain is only as strong as its weakest link.)

- US states push AI transparency and deepfake bills as federal preemption looms (Illinois advances mandatory AI disclosure under its Consumer Fraud Act, Hawaii passes minor protection and suicide prevention protocols for AI, and multiple states introduce provenance data requirements for generative AI — all while the White House framework threatens to override them.)

- Illinois bans AI chatbot therapy, deepfake protections pass unanimously (HB 883 prohibiting AI chatbot therapy passed the Illinois House 110-23 on March 23, while SB 8 deepfake protections cleared the Senate 45-0 on March 19 — two of the strongest state-level AI restrictions to advance this year.)

AI research news

- Agentic AI and the next intelligence explosion (A new paper examining how agentic AI systems — autonomous, goal-directed agents that plan, reason, and act — could trigger a qualitatively different kind of intelligence scaling, distinct from the parameter-scaling paradigm.)

- Hyperagents (Research into agent architectures that operate above the level of individual LLM calls, coordinating multi-step, multi-tool workflows with planning and self-correction capabilities.)

- LeWorldModel: Stable End-to-End Joint-Embedding Predictive Architecture from Pixels (A new world model architecture learning predictive representations directly from pixel input, advancing Yann LeCun’s JEPA vision toward stable, scalable world models.)

- OpenAI: Reasoning models can’t control their chain of thought (Good news for safety monitoring — current reasoning models struggle to hide or obfuscate their intermediate reasoning even when told they’re being observed, suggesting CoT monitoring remains a viable safety mechanism for now.)

- Gemini 3.1 Pro doubles ARC-AGI-2 reasoning score (Google’s Gemini 3.1 Pro hits 77.1% on ARC-AGI-2 — more than double its predecessor — while cutting hallucination rates from 88% to 50% on Vectara benchmarks. A 256K token context window makes it the default starting point for many enterprise use cases.)

AI hardware news

- Arm launches AGI CPU — its first production silicon in 35 years (A 136-core data centre processor on TSMC 3nm, co-developed with Meta and designed for agentic AI orchestration. Partners include OpenAI, Cerebras, and Cloudflare. Arm projects $15B in revenue from AGI CPU alone by 2031 — the biggest strategic bet in the company’s history.)

- Nvidia’s $20B Groq deal produces its first chip at GTC (The Groq 3 LPU, manufactured by Samsung on 4nm, is Nvidia’s first rack-scale product built around non-GPU silicon. Paired with Vera Rubin NVL72 it delivers 35x higher throughput per megawatt — a clear pivot from training dominance to inference economics.)

- Micron shatters expectations with $23.9B record revenue as AI memory “supercycle” ignites (Gross margins hit 74.9% and EPS of $12.20 crushed the $8.80 consensus. The HBM3E ramp-up gave Micron a competitive edge with 30% less power consumption, confirmation that the real AI hardware bottleneck has shifted from compute to memory and packaging.)

- Broadcom flags TSMC capacity as a bottleneck choking 2026 AI chip production (TSMC is hitting production limits for the first time in recent memory, with PCB lead times for optical transceivers stretching from 6 weeks to 6 months. Broadcom’s AI semiconductor revenue hit $8.4B in Q1, up 106% YoY — demand is outrunning every layer of the supply chain.)

- The “AI Wall”: grid access halts chip deployment despite stockpiled GPUs (Power grid limitations are physically preventing AI infrastructure from scaling. Morgan Stanley forecasts a 49 GW shortfall in US data centre power access by 2028 — shifting builds to power-rich regions away from traditional hubs like Northern Virginia.)