Welcome to our weekly news post, a combination of thematic insights from the founders at ExoBrain, and a broader news roundup from our AI platform Exo…

JOEL

This week we look at:

- How the Iran war is exposing AI’s dependency on helium and natural gas

- MiniMax’s M2.7 and the frontier model that participated in its own creation

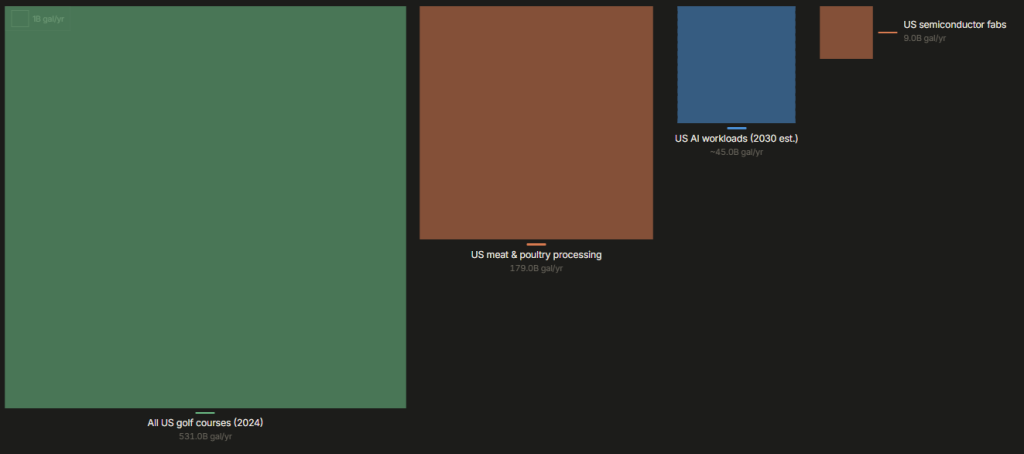

- A new visualisation putting AI water consumption in perspective

Will AI run out of gas?

Three weeks into the war in Iran, the human cost is mounting. People are being displaced, cities are under bombardment, and the Strait of Hormuz, through which 20% of the world’s oil passes each day, has become highly contested. Our thoughts are with those caught in the violence. But as the crisis unfolds, it is exposing something the AI industry has been slow to confront: the current boom runs on gas, and its supply is now under severe threat. This is not metaphorical gas, but actual gas.

Helium is one of the most critical industrial gases on Earth. Semiconductor fabrication at the cutting edge, the sub-5-nanometre processes used by TSMC to produce every Nvidia AI accelerator and every Apple processor, requires ultra-pure helium for wafer cooling during lithography and for leak detection. Without it, the fabs shut down. Qatar is the world’s second-largest helium producer, supplying a third of global output, some 63 million cubic metres in 2025. When Qatar’s Ras Laffan facility, the world’s largest LNG plant, declared force majeure at the start of the conflict on February 28, it removed 5.2 million cubic metres of helium from the global market every month. Prices have already doubled.
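As a quick sanity check on those figures, here is a back-of-envelope sketch in Python. It uses only the numbers quoted above (63 million cubic metres a year, roughly a third of global output); the variable names are ours, and the implied global total is a rough inference, not a reported statistic.

```python
# Figures quoted in the paragraph above.
qatar_annual_m3 = 63_000_000      # Qatar helium output, 2025
qatar_share_denominator = 3       # "a third of global output"

# Monthly volume removed from the market if Qatar's output stops entirely.
qatar_monthly_m3 = qatar_annual_m3 / 12

# Implied global output, assuming the one-third share is exact (it is approximate).
implied_global_m3 = qatar_annual_m3 * qatar_share_denominator

print(f"Qatar monthly output: {qatar_monthly_m3 / 1e6:.2f} million m3")
print(f"Implied global output: {implied_global_m3 / 1e6:.0f} million m3 per year")
```

The monthly figure comes out at about 5.25 million cubic metres, in line with the 5.2 million quoted above.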

What makes helium uniquely vulnerable is its physics. It is the second lightest element in the universe. Its atoms are so small they can escape even the most sophisticated storage systems. It has the lowest boiling point of any element, just four degrees above absolute zero, so it is hard to keep liquefied in transport and storage. The global supply chain operates on roughly 45 days of buffer before existing inventory boils off. You cannot stockpile helium for a rainy day. If disruption lasts 60 to 90 days, prices could surge another 25-50%, potentially exceeding $2,000 per thousand cubic feet, more than four times pre-war levels. South Korea sources 65% of its helium from Qatar. Taiwan is similarly exposed. TSMC says operations are normal “for now,” but Fitch Ratings warned of “rising tail risk” and Tom’s Hardware put the chip supply chain “on a two-week clock” back on March 11.

There is some resilience built in. The US is the world’s largest helium producer. Algeria and Russia produce meaningful volumes. Air Liquide operates an underground storage facility in Germany with a capacity of 47 million cubic metres per year. Leading-edge fabs can recycle 80-90% of their helium. But Fitch have noted that chipmakers may be forced to prioritise high-margin AI chips over everything else, which would squeeze consumer electronics and automotive sectors to keep the AI supply chain alive.

Natural gas generated 40% of US electricity in 2025, making it the country’s single largest power source. And a rapidly growing share of that electricity flows straight into AI data centres. Gas-fired projects directly linked to US data centres have surged 25-fold in just two years, with over a third of all new US gas demand now driven by data centres. Elon Musk’s xAI operates Colossus, the world’s first gigawatt-class AI data centre, in Memphis, Tennessee, running on gas turbines. The backbone of the AI inference boom is natural gas: likely more than 50% of all US inference is gas-powered, and that is set to rise above 60% as new builds come online.

The US is technically self-sufficient in gas thanks to the shale revolution. But self-sufficiency and price insulation are not the same thing. Gas is a global commodity. When Qatar’s LNG exports go offline, and Qatar supplied a fifth of the world’s LNG, European and Asian buyers scramble for alternatives. US producers see international prices spike and look to export more. Domestic prices follow. WTI crude has surged 40% since the war began, exceeding $100 a barrel. Dutch TTF gas prices are up 50-60%. US gasoline costs have risen 17%. These translate directly into higher operating costs for every data centre in America, and every AI inference call those data centres serve.

So what does this mean for the trajectory of AI? In the short term, the impact is somewhat manageable because helium buffers can hold for a few more weeks and US gas supply is robust even as prices climb. But the AI industry has built its future on 20th-century energy and materials infrastructure, and that infrastructure passes through some of the most geopolitically exposed corridors on Earth. The mechanisms for resilience exist but need activating. Helium recycling rates at leading fabs already reach 80-90%, and supply diversification towards the US, Algeria and Russia is underway. On the energy side, the push for nuclear and renewables for data centres has never looked more urgent. But for every organisation that depends on AI, and that is increasingly all of us, the real message from the Iran war is about concentration risk: chip fabrication in Taiwan, helium in Qatar, energy in fossil fuels, and AI compute in a handful of hyperscaler clouds. Too many eggs in too few baskets.

Takeaways: The Iran war is a huge stress test for the AI supply chain, and it is revealing how deeply it depends on physical resources. For businesses and technologists building AI resilience plans, the playbook is diversification: local inference on capable open-weight models that can run on modest hardware; multi-cloud and on-premises strategies that reduce dependency on any single provider or region; and a hard, honest look at the energy assumptions underpinning your AI strategy. The future of AI is not just about scaling LLMs. It is about whether gas keeps flowing.

The model that built itself

Last week we looked at Andrej Karpathy’s autoresearch, a simple script that runs ML experiments while you sleep. This week, a Chinese AI lab called MiniMax showed us what happens when you scale that idea up to frontier model development. Their new model, M2.7, comes with a detailed, first-hand account of how it participated in its own creation.

The workflow is relatively simple. A researcher on MiniMax’s reinforcement learning team starts by discussing an experimental idea with the model. From there, M2.7 takes over: it reviews the literature, tracks the experiment spec, pipelines the data, launches the training run, monitors progress, reads logs, debugs failures, analyses metrics, and submits code fixes. The human researcher only steps back in for critical decisions. MiniMax says the model now handles 30-50% of the daily workflow that previously required multiple researchers across different teams.

But the more interesting part is what happens next. They gave M2.7 a specific task: optimise a programming scaffold. The model ran autonomously for over 100 rounds, following a loop of analysing failures, planning changes, modifying code, evaluating results, and deciding whether to keep or revert each change. It discovered optimisations the team hadn’t specified, like systematically searching for optimal sampling parameters and adding cross-file bug pattern detection. The result was a 30% performance improvement. No human touched the loop.
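The keep-or-revert loop described above can be sketched in a few lines of Python. This is a toy illustration, not MiniMax’s scaffold: `evaluate` and `propose_change` are hypothetical stand-ins for a real benchmark run and for the model’s own planning and code changes, and the sampling parameters and their target values are invented for the example.

```python
import random

def evaluate(config):
    """Hypothetical stand-in for a benchmark run: higher is better."""
    # Toy objective that peaks at temperature=0.7, top_p=0.9 (invented values).
    return 1.0 - abs(config["temperature"] - 0.7) - abs(config["top_p"] - 0.9)

def propose_change(config, rng):
    """Hypothetical stand-in for the 'plan and modify' step: nudge one parameter."""
    candidate = dict(config)
    key = rng.choice(sorted(candidate))
    nudged = candidate[key] + rng.uniform(-0.1, 0.1)
    candidate[key] = min(1.0, max(0.0, nudged))
    return candidate

def optimise(config, rounds=100, seed=0):
    """Run the loop: propose, evaluate, then keep the change or revert it."""
    rng = random.Random(seed)
    best_score = evaluate(config)
    history = []
    for round_no in range(rounds):
        candidate = propose_change(config, rng)
        score = evaluate(candidate)
        kept = score > best_score
        if kept:
            # Keep the change: it becomes the new baseline.
            config, best_score = candidate, score
        # Otherwise revert simply by leaving config untouched.
        history.append((round_no, score, kept))
    return config, best_score, history

start = {"temperature": 0.2, "top_p": 0.5}
final, final_score, history = optimise(start)
kept_count = sum(1 for _, _, kept in history if kept)
print(f"start score {evaluate(start):.3f}, final score {final_score:.3f}, "
      f"{kept_count} of {len(history)} changes kept")
```

Because a change is only kept when it beats the current best, the score can never get worse from one round to the next. That is the property that makes it safe to leave a loop like this running unattended.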

Then they pushed further. They pointed M2.7 at 22 machine learning competitions and gave it 24 hours to iterate autonomously. After each round, the model wrote a memory file, criticised its own results, and fed those reflections into the next attempt. Its best run earned 9 gold medals, 5 silver, and 1 bronze, a medal rate tying with Google’s Gemini 3.1.
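The memory-file pattern is easy to picture in code. The sketch below is our own minimal illustration, assuming a JSON file of notes carried between rounds; `attempt` and `critique` are hypothetical placeholders for the model’s actual work, and the scoring rule is invented purely so the example runs.

```python
import json
import tempfile
from pathlib import Path

def attempt(task, notes):
    """Hypothetical stand-in for one competition attempt; returns a score."""
    # Toy behaviour: each lesson carried forward lifts the score a little.
    return min(1.0, 0.5 + 0.1 * len(notes))

def critique(score, round_no):
    """Hypothetical stand-in for the model criticising its own result."""
    return f"round {round_no}: scored {score:.2f}, revisit feature choices"

def run_with_memory(task, memory_path, rounds=3):
    """Each round reads earlier reflections, makes an attempt, then appends a new one."""
    scores = []
    for round_no in range(rounds):
        notes = json.loads(memory_path.read_text()) if memory_path.exists() else []
        score = attempt(task, notes)
        notes.append(critique(score, round_no))
        memory_path.write_text(json.dumps(notes, indent=2))
        scores.append(score)
    return scores

with tempfile.TemporaryDirectory() as tmp:
    memory_file = Path(tmp) / "memory.json"
    scores = run_with_memory("toy-competition", memory_file)
    saved_notes = json.loads(memory_file.read_text())

print(scores)
print(saved_notes[-1])
```

The design point is that the only state shared between rounds is the file itself, so a run can be stopped and resumed, and a human can read exactly what the model concluded at each step.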

What makes this useful beyond the AI world is the pattern it reveals. Any iterative R&D process, whether drug discovery, financial modelling, or product development, follows the same basic loop: plan, execute, analyse, review, iterate. MiniMax has shown that AI can already own the middle of that loop autonomously, while humans hold the edges. That boundary will shift, but the shape of the collaboration is becoming clear.

Takeaways: MiniMax’s M2.7 gives us the first detailed blueprint for how AI labs are using models in their own development. The most productive teams will be the ones that learn to hand over the middle of the loop, the execution, monitoring, and analysis, while focusing human attention where it still matters most, at the creative and strategic edges.

EXO

Water footprints in context

The chart this week comes from Andy Masley, a researcher who has spent the past year challenging claims about AI’s environmental footprint. His interactive visualisation compares US water consumption across industries using public supply (potable) water. US meat and poultry processing consumes 108 billion gallons per year. All US golf courses drink 48 billion. Semiconductor fabs use 9 billion. And all US AI workloads? They are projected to use 45 billion by 2030.

But experts caution against dismissing concerns outright. “In the near term, it’s not a nationwide crisis,” says Cornell professor Fengqi You. “But in locations that have existing water stress, building these AI data centres is gonna be a big problem.” Jonathan Koomey, a leading researcher on data centre efficiency, agrees: “Each situation has to be evaluated in the context of the specific design of the facility being proposed.”

Weekly news roundup

AI business news

- Astral to join OpenAI (The creators of uv and Ruff — now foundational to modern Python development — joining OpenAI’s Codex team is the clearest signal yet that AI coding assistants are acquiring the developer tooling layer, not just wrapping it.)

- Cursor Composer 2 is just Kimi K2.5 with RL (Cursor building its $30B+ valuation on a fine-tuned open-source Chinese model raises pointed questions about what “proprietary AI” actually means when the base model is open-weight Kimi K2.5.)

- Bezos launches $100B AI manufacturing initiative through Amazon Industrial AI (Bezos committing $100B to AI-driven manufacturing is the largest single corporate bet on physical-world AI deployment, dwarfing the data centre investments that dominated 2025.)

- DoorDash launches Tasks app, paying users to train AI by running real-world errands (DoorDash paying gig workers to generate real-world training data through structured errands inverts the typical AI data pipeline — and creates a new category of human-in-the-loop labour.)

- PwC fires staff who refuse to use AI tools, memo reveals (By explicitly firing staff for refusing AI tools, PwC becomes the first major professional services firm to make AI adoption a condition of employment, a threshold that will be watched across the industry.)

AI governance news

- Speeding Up the “Kill Chain”: Pentagon Bombs Thousands of Targets in Iran Using Palantir AI (Democracy Now’s investigation into how Project Maven — using Palantir and Claude — is accelerating kill chains in Iran, including the girls’ school strike that killed over 170 people, is the most detailed public account yet of AI-enabled targeting in a live war.)

- White House calls on Congress to preempt all state AI laws (The White House explicitly calling for federal preemption of all state AI laws is the most consequential U.S. AI governance move since the Biden executive orders were revoked — and would override California’s SB 1047 and every other state-level effort.)

- EU AI Act enforcement begins with first compliance audits (The EU’s first formal AI Act compliance audits mark the transition from legislation to enforcement — companies now face real regulatory consequences, not just future obligations.)

- 150 retired judges file amicus brief supporting Anthropic in Pentagon lawsuit (Nearly 150 retired federal and state judges backing Anthropic against the Pentagon’s supply-chain-risk designation signals that the legal establishment views the government’s action as a serious overreach of executive authority.)

- Jensen Huang proposes paying workers in AI tokens at GTC (Nvidia’s CEO floating a model where workers are compensated partly in AI compute tokens reframes the relationship between labour and compute in a way that could reshape compensation structures across the tech industry.)

AI research news

- EnterpriseOps-Gym (ServiceNow’s EnterpriseOps-Gym exposes a critical gap: existing benchmarks don’t capture the stateful, multi-step complexity of real enterprise workflows, making current agent evaluations unreliable guides for actual deployment decisions.)

- Autonomous Agents Coordinating Distributed Discovery (MIT’s ScienceClaw+ Infinite shows autonomous agents conducting decentralized scientific research by exchanging artifacts — an early signal of what fully uncoordinated AI-driven discovery pipelines could look like at scale.)

- Inducing Sustained Creativity and Diversity in Large Language Models (Harvard’s new decoding scheme unlocks sustained creative diversity from LLMs — producing as many conceptually unique results as desired without repetition, directly useful for anyone using LLMs for ideation, research exploration, or design.)

- OpenClaw-RL: Train Any Agent Simply by Talking (OpenClaw-RL treats every agent interaction — conversations, terminal commands, GUI clicks — as a training signal, enabling agents that improve simply by being used, with zero manual reward engineering.)

- EvoX: Meta-Evolution for Automated Discovery (EvoX jointly evolves both candidate solutions and the search strategies used to generate them, outperforming AlphaEvolve and OpenEvolve across nearly 200 real-world optimisation tasks — a direct successor to the autoresearch pattern.)

AI hardware news

- US charges three tied to Super Micro Computer with helping smuggle billions of dollars of AI chips to China (The indictment of three individuals for allegedly smuggling billions in Nvidia AI chips to China is the most consequential hardware export-control enforcement action since export restrictions began — and signals prosecutors are now targeting supply chain actors, not just end users.)

- Nvidia updates data center product roadmap following LPU launch at GTC 2026 (Nvidia’s post-GTC roadmap committing to annual GPU and LPU architecture releases redefines competitive cadence in AI hardware, putting pressure on every rival planning multi-year product cycles.)

- Samsung Elec to supply HBM4 chips to OpenAI, South Korean paper says (Samsung winning an HBM4 supply agreement with OpenAI would mark a major comeback for a company that spent 2025 struggling to pass Nvidia’s quality bar — and reshapes the memory supply chain for frontier AI training.)

- Meta Is Developing 4 New Chips to Power Its AI and Recommendation Systems (Meta designing four proprietary chips in parallel — rather than buying off the shelf — signals that hyperscalers are now treating custom silicon as a core strategic moat, not a cost-saving afterthought.)

- Musk: “Tesla’s TeraFab AI chip plant to start within a week” – MK (If Tesla’s TeraFab actually breaks ground this week as Musk announced, it becomes the first meaningful attempt by a non-traditional player to vertically integrate AI chip manufacturing at gigafactory scale — a structural bet that in-house silicon is existential for autonomous AI systems.)