Welcome to our weekly news post, a combination of thematic insights from the founders at ExoBrain, and a broader news roundup from our AI platform Exo…

Themes this week

JOEL

This week we look at:

- AI’s hidden impact on jobs and the broken ladder for young workers

- Anthropic’s Claude Opus 4.5 striking back against Gemini 3

- Ilya Sutskever on why scaling alone won’t close AI’s capability gaps

Project Iceberg reveals AI’s true impact

The framework for understanding AI’s impact on work has been stuck in a paradox: the widespread expectation of rapid automation is contradicted by a lack of material signals in employment and economic data. The standard disruption narrative has struggled to find a footing in the real economy. But a cluster of studies released in the past week may be moving the discussion forward. Four reports from McKinsey, MIT, BearingPoint, and Anthropic contradict one another in places, yet converge on conclusions that are harder to dismiss.

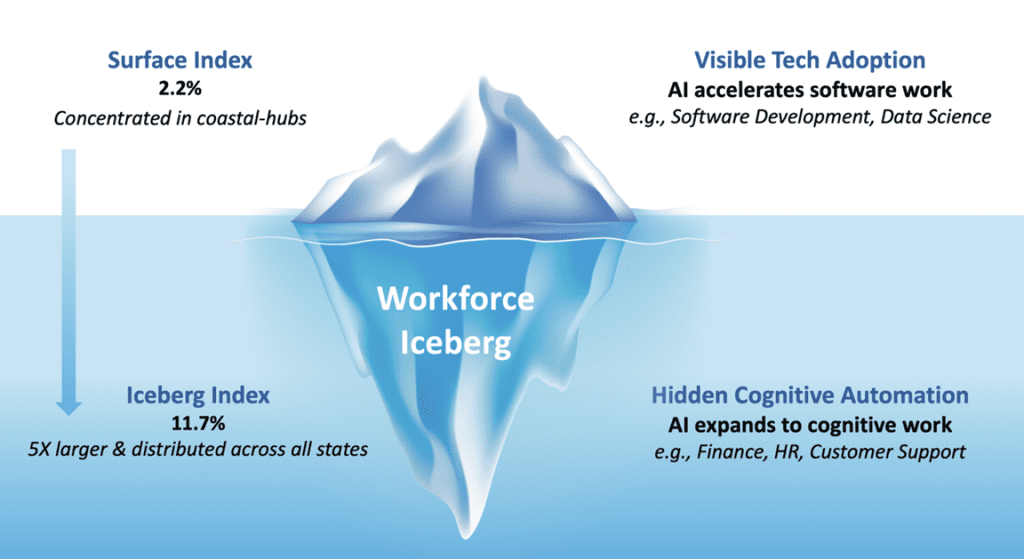

The most visceral framing comes from MIT’s new “Iceberg Index”, a labour simulation tool built with Oak Ridge National Laboratory. Their finding is precise: 11.7% of the US workforce, representing $1.2 trillion in annual wages, can already be replaced by currently available AI systems. This is not a forecast based on hypothetical future capabilities. It describes technology that exists today.

MIT’s researchers draw a deliberate visual distinction. The tech sector layoffs that dominate headlines represent just 2.2% of total wage exposure. This is the visible tip of the iceberg. The submerged mass lies elsewhere: routine functions in human resources, logistics, finance, and office administration. These roles rarely make news when they disappear. They simply stop being filled.

A survey by BearingPoint found that half of global C-suite executives believe their organisations already have 10% to 20% workforce overcapacity “because… AI”. This could be thought of as a kind of “AI-washing”. Are these redundancies genuinely driven by new technology, or are executives using the AI efficiency narrative to justify cuts that have other causes? Many companies over-hired during the pandemic boom. Interest rates rose sharply in 2022, making capital expensive and efficiency attractive. Economic uncertainty around geopolitical tensions, tariffs and trade has made more defensive cost-cutting appealing.

Amazon CEO Andy Jassy indicated that AI would shrink the workforce in the coming years. Then the company announced 14,000 job cuts. Then Jassy clarified that these cuts were “not even really AI-driven, not right now”, attributing them instead to bloated bureaucracy. The technology and the narrative are becoming difficult to separate. What remains clear is the outcome: 48,414 US job cuts cited AI as a factor this year, with 31,000 announced in October alone. Whether the cause is genuine automation or convenient framing, the jobs are disappearing.

Anthropic released research this week analysing 100,000 Claude conversations. They found that AI reduces task completion time by an average of 80%. Legal and management tasks that would take a professional nearly two hours can be completed in minutes. The correlation between high hourly wages and AI-assisted task duration is strong. AI is successfully handling expensive, complex knowledge work, not just routine data entry.

Yet Anthropic’s researchers identify a critical constraint: the bottleneck. While AI accelerates the generation of work, it cannot accelerate the verification or implementation at the same rate. A software developer can produce code far faster with AI assistance, but someone still needs to review it, test it, and integrate it into existing systems. A lawyer can draft a contract in minutes, but someone still needs to check it against the specific circumstances of the deal. A teacher can generate lesson plans instantly, but still needs to stand in front of the classroom.

This may partially explain why the massive productivity gains have not yet appeared in economic statistics. Yale researchers examining aggregate US labour market data found “zero discernible disruption” since ChatGPT launched in November 2022. The technology is accelerating individual tasks, but the human bottlenecks (the judgment, verification, and physical presence that AI cannot replicate) are constraining the system-level impact.
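The bottleneck argument can be made concrete with a rough, Amdahl's-law-style calculation. This is a sketch for illustration only, not Anthropic's methodology: the function name and the shares of workflow time are hypothetical, and only the 80% task-level reduction comes from the research cited above.

```python
def remaining_time_fraction(ai_share: float, task_time_cut: float) -> float:
    """Fraction of the original workflow time left after AI assistance.

    ai_share: share of workflow time spent on work AI can accelerate (0 to 1).
    task_time_cut: time reduction on that share (0.8 means 80% less time).
    """
    if not 0.0 <= ai_share <= 1.0 or not 0.0 <= task_time_cut <= 1.0:
        raise ValueError("both arguments must be between 0 and 1")
    # Review, testing, integration and delivery are assumed to take as long as before.
    untouched = 1.0 - ai_share
    # Only the AI-assisted portion shrinks.
    accelerated = ai_share * (1.0 - task_time_cut)
    return untouched + accelerated


if __name__ == "__main__":
    # Hypothetical shares of workflow time that AI can accelerate.
    for share in (0.25, 0.5, 0.75):
        saved = 1.0 - remaining_time_fraction(share, 0.8)
        print(f"AI-accelerated share {share:.0%}: workflow time saved {saved:.0%}")
```

Under these assumptions, a workflow where only half the time is spent on AI-accelerable work gets about 40% faster overall, not 80%. The untouched half sets the ceiling.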

Once those bottlenecks are addressed through workflow redesign rather than just task automation, Anthropic estimates the US could see 1.8% annual productivity growth. This would double the rate seen since 2019, and potentially reverse the productivity stagnation that has persisted since the 2008 financial crisis. Total factor productivity growth has been below 1% for most of the past fifteen years. The prize is substantial, but claiming it requires more than simply deploying AI tools.

The bottleneck insight also illuminates the most troubling pattern in the data: the disproportionate impact on early-career workers. A Stanford study found a 13% relative decline in employment for workers aged 22 to 25 in AI-exposed occupations. Experienced workers in the same fields have not seen equivalent losses. King’s College London research found that firms with high AI exposure cut junior positions by 5.8%, while overall employment fell by only 4.5%. UK tech graduate hiring crashed 46% this year, with a further 53% drop projected for 2026. This is the submerged mass of MIT’s iceberg.

This pattern inverts conventional assumptions about technological disruption. The usual expectation is that younger, digitally fluent workers will adapt more easily while older workers struggle. AI appears to work in reverse. Experience, judgment, and the ability to verify and orchestrate AI outputs now function as a protective shield. Junior employees have traditionally been hired to perform structured, codifiable tasks, precisely the work that AI handles well. If a senior partner can use an agent to complete an associate’s work in minutes, the associate may never be hired.

The problem extends beyond individual job losses. Junior roles are not just cheap labour. They are the training ground where professionals develop tacit knowledge, learn to exercise judgment, and build the relationships that enable senior work. Companies eliminating entry-level positions are breaking their own talent pipelines. The question nobody seems to be asking: where do the seniors of 2035 come from if the juniors of 2025 were never hired? PwC’s global chairman acknowledged this week that AI “may eventually lead to fewer entry-level graduates being hired”. The business logic is clear. The long-term consequences remain problematic.

McKinsey’s report offers a detailed counter-narrative to simple displacement. Their analysis estimates AI could unlock $2.9 trillion in annual economic value in the US by 2030. But this comes with a substantial caveat: the value requires workflow redesign, not just task automation.

Currently, most organisations are layering AI onto existing processes. They automate individual tasks while leaving the surrounding workflow unchanged. McKinsey’s research suggests this approach captures only a fraction of the potential value. The larger gains come from rebuilding workflows entirely around the capabilities of what they call “people, agents, and robots” working together. Agents handle non-physical cognitive work. Robots handle physical tasks. Humans provide judgment, oversight, relationships, and the ability to operate in unpredictable environments.

The shift changes what humans do rather than eliminating what humans do. Roles move from performing work to orchestrating systems that perform work. Managers supervise not just people but hybrid teams of people and AI agents. The skills that matter most are not the ones AI can replicate, such as routine analysis and document preparation, but the ones it cannot: coaching, negotiation, contextual judgment, and building trust.

McKinsey found that 70% of skills used today appear in both automatable and non-automatable tasks. Skills persist, but their applications shift. AI fluency demand has risen sevenfold in two years, the fastest growth for any skill they track. The workforce is splitting between those who can orchestrate AI systems and those whose work AI systems can replace.

Takeaways: The reports this week suggest a focus for business leaders: AI value lies in redesign, not reduction. Companies treating AI as a cost-cutting tool, a way to trim headcount while maintaining existing processes, will capture a fraction of the potential. The $2.9 trillion opportunity belongs to organisations that rebuild workflows from the ground up, repositioning humans as orchestrators of hybrid systems rather than performers of individual tasks. This means investing in AI fluency across the workforce, not just in technical teams. It means rethinking the role of junior employees, perhaps as AI supervisors and output validators rather than task performers, preserving the training function that builds future leaders. It means examining which human bottlenecks constrain the productivity gains AI enables, and redesigning processes to address them. The broken ladder is a choice being made in boardrooms right now, often unconsciously, as companies freeze hiring rather than reimagine what work could become. The organisations that recognise this moment as a redesign opportunity rather than a reduction opportunity will be the ones that capture both the productivity gains and the talent pipeline that sustains them.

Claude fights back on power and price

Just six days after Google unveiled Gemini 3 and claimed the benchmark crown, Anthropic answered with Claude Opus 4.5. We were probably too quick, like everyone else, to suggest the race was over.

On SWE-Bench Verified, the industry standard for coding, Claude Opus 4.5 scored 80.9%, edging past both GPT-5.1’s Codex-Max (77.9%) and Gemini 3 Pro (76.2%). It also leads on Terminal-Bench, which tests a model’s ability to operate a Linux command line autonomously: Claude scored 59.3% against Gemini’s 54.2%. Claude also excels at sustained work. It can maintain reasoning across long sessions, preserving its chain of thought where earlier models would lose the thread. That said, Gemini 3 Pro still dominates on raw reasoning challenges. On Humanity’s Last Exam, Gemini scored 37.5% compared to Claude’s 13.7%. On ARC-AGI, another reasoning benchmark, Gemini reached 31% in standard mode and 45% with its Deep Think feature enabled. Claude trails on both. The picture that emerges is one of specialisation: Claude is the model you want writing and debugging your code, while Gemini currently handles the hardest abstract reasoning tasks. Neither has pulled decisively ahead across the board.

But the real story is efficiency. Claude Opus 4.5 can deliver its performance while using 76% fewer tokens: superior quality at roughly a quarter to a third of the computational work. Anthropic paired this with a dramatic price cut, from $15/$75 per million input/output tokens to just $5/$25 (still above the $2/$12 of Gemini 3, but far closer to it). The previous Opus models were powerful but prohibitively expensive. This one brings Claude-style frontier capability within reach of far more teams and use cases.
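To see how the price cut and the token saving compound, here is a back-of-the-envelope sketch. The per-million-token prices are the ones quoted above. The workload size is hypothetical, and applying the 76% reduction to output tokens only is our assumption, not something the announcement specifies.

```python
def cost_usd(input_tokens: int, output_tokens: int,
             input_price: float, output_price: float) -> float:
    """Cost in dollars, with prices quoted per million tokens."""
    return (input_tokens * input_price + output_tokens * output_price) / 1_000_000


# Prices per million input/output tokens, as quoted in the text.
OLD_OPUS = (15.0, 75.0)
OPUS_4_5 = (5.0, 25.0)

# Hypothetical workload, chosen for round numbers; not a measured figure.
INPUT_TOKENS = 1_000_000
OUTPUT_TOKENS = 1_000_000

# Assumption: the 76% token reduction applies to output tokens only.
reduced_output = OUTPUT_TOKENS * (100 - 76) // 100

before = cost_usd(INPUT_TOKENS, OUTPUT_TOKENS, *OLD_OPUS)
price_cut_only = cost_usd(INPUT_TOKENS, OUTPUT_TOKENS, *OPUS_4_5)
price_and_tokens = cost_usd(INPUT_TOKENS, reduced_output, *OPUS_4_5)

if __name__ == "__main__":
    print(f"Old Opus pricing:          ${before:.2f}")
    print(f"Opus 4.5, same tokens:     ${price_cut_only:.2f}")
    print(f"Opus 4.5, 76% fewer output: ${price_and_tokens:.2f}")
```

On this illustrative workload the price cut alone takes the bill to a third of what it was, and the token saving cuts it further. Real savings depend on the input/output mix and on how much of the token reduction a given task actually sees.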

November has been a remarkable month. OpenAI, Google, xAI, and now Anthropic have all shipped major upgrades. The progress curve continues upward, with no sign of the ceiling that some predicted. In the domains where these models excel (coding, analysis, tool use), the gains keep coming.

Takeaways: At this price point, with this efficiency, Claude becomes a serious contender for business deployment. Whether it’s writing and reviewing code, browsing the web for research, or working through spreadsheets with the new Excel plugin (we’ve been beta testing this and it’s incredible for this kind of desktop work), organisations now have a balanced frontier model that won’t burn through budgets. For teams weighing their AI options, Opus 4.5 makes the decision harder in the best possible way.

EXO

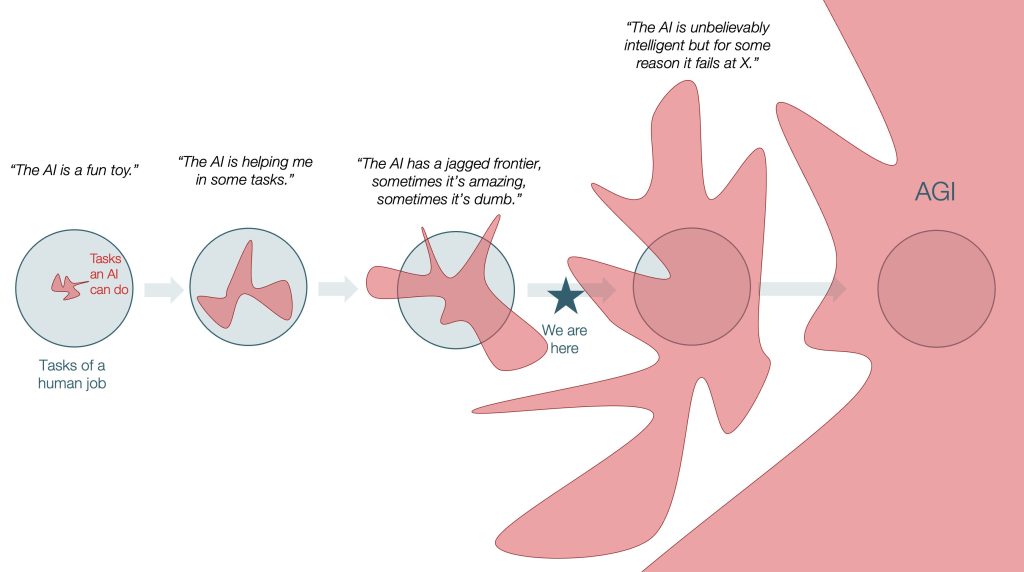

Visualising the jagged frontier

This jagged, spiky shape from Silicon Valley writer and analyst Tomas Pueyo nicely illustrates how AI intelligence has developed and the situation we find ourselves in today: models that excel at specific tasks while failing unexpectedly at other simple challenges. Gemini and Claude ace PhD-level reasoning tests, yet both still make mistakes a child would not.

The moment of the week was undoubtedly ex-OpenAI co-founder and leading AI researcher Ilya Sutskever’s first major public pronouncements since last year, when he declared the end of model pre-training. On the Dwarkesh podcast he doubled down on that view. Pre-training plus ever more compute took us astonishingly far, but he argues the easy wins from “just scaling” are largely behind us. We are now discovering the limits of this recipe through that jagged capability frontier. Sutskever’s claim is that closing those gaps is no longer an optimisation problem but a science problem. To move forward, labs will need new ideas about representation, memory and learning, and crucially better generalisation.

The path to AGI requires closing the gaps between the spikes on this chart. Around a year ago, mathematics looked like one of the most difficult areas, yet this week DeepSeek released an open-weight frontier Math-V2 model that can compete at Olympiad level. (To put this in perspective, the model scored 118/120 on the Putnam test, far exceeding the score of any of the thousands of people who sit this prestigious competition each year.) This is a reminder that some gaps can suddenly disappear when a new idea lands, while others may remain frustratingly wide.

Weekly news roundup

This week’s developments show AI’s accelerating impact on major tech valuations, rising concerns about governance and safety, breakthrough research in agentic systems, and intensifying competition in the AI hardware supply chain.

AI business news

- AI video start-up takes wide-angle view to boost growth (Shows how emerging AI companies are finding novel approaches to compete in the crowded generative video space.)

- Apple’s lousy AI didn’t stop it beating Samsung phone sales (Demonstrates that consumer adoption isn’t solely driven by AI features, offering perspective on market priorities.)

- Alphabet races toward $4 trillion valuation as AI-fuelled gains accelerate (Illustrates the massive financial impact of AI leadership on company valuations and market dynamics.)

- Does the new Flux.2 beat Nano Banana Pro? (Highlights the rapid iteration and competition in open-source image generation models.)

- The Thinking Game review – DeepMind study offers wide-lens view of our tech lords and AGI (Provides cultural perspective on how AI development is being documented and understood by wider audiences.)

AI governance news

- Anthropic, Google Cloud, Quantum Xchange CEOs called to testify on AI cyber threats (Shows increasing governmental scrutiny of AI safety and security concerns at the highest levels.)

- AI slop recipes are taking over the internet — and Thanksgiving dinner (Illustrates the spreading problem of low-quality AI-generated content affecting everyday life.)

- OpenAI denies allegations that ChatGPT is to blame for a teenager’s suicide (Highlights serious legal and ethical challenges facing AI companies regarding user safety.)

- OpenAI dumps Mixpanel after analytics breach hits API users (Demonstrates ongoing security challenges in AI infrastructure and data protection.)

- Lifetime access to WormGPT 4 costs just $220 (Shows the growing accessibility of malicious AI tools and potential security implications.)

AI research news

- Trump’s AI ‘Genesis Mission’: what are the risks and opportunities? (Analyses potential policy shifts that could reshape AI development priorities and funding.)

- Evolution strategies at the hyperscale (Presents new approaches to scaling evolutionary algorithms for large-scale optimisation problems.)

- General agentic memory via deep research (Explores breakthrough methods for giving AI agents more sophisticated memory capabilities.)

- ToolOrchestra: elevating intelligence via efficient model and tool orchestration (Advances the field of multi-tool AI systems coordination and efficiency.)

AI hardware news

- Pollution from coal plants was dropping. Then came Trump and AI. (Reveals the environmental cost of AI’s massive energy demands and policy implications.)

- Tech firms from Dell to HP warn of memory chip squeeze from AI (Signals potential supply chain bottlenecks that could affect AI deployment and costs.)

- Nvidia says its GPUs are a ‘generation ahead’ of Google’s AI chips (Highlights ongoing competition in AI hardware that drives innovation but also market concentration.)

- Taiwan 2025 GDP growth forecast hits 15-year high on surge in AI demand (Shows macroeconomic impact of AI boom on semiconductor manufacturing economies.)

- Chinese regulators block ByteDance from using Nvidia chips, The Information reports (Illustrates geopolitical tensions affecting AI development and technology access.)