Welcome to our weekly news post, a combination of thematic insights from the founders at ExoBrain, and a broader news roundup from our AI platform Exo…

JOEL

This week we look at:

- Why Opus 4.7’s new adaptive thinking mode has drawn such an unusually hostile response

- Jensen Huang’s Dwarkesh interview and what Mythos on Trainium says about Nvidia’s moat

- OpenAI’s Codex desktop release and the quiet arrival of an AI super app

The adaptive thinking backlash

Anthropic launched Opus 4.7 this week and the reaction has been unusually negative. Within hours, power users were complaining the model felt shallower than 4.6, followed instructions worse, and burned through weekly allowances at an alarming rate. On X and Reddit, long-time Claude fans described spending hours debugging their own setups because they could not believe the new release was really the upgrade it was billed as. One widely shared post called it “basically 4.6 with low thinking as a default”. The word most often used was regression.

The central change sits behind a harmless-sounding phrase. Extended thinking, the old toggle that let users decide how hard Claude should reason, has been replaced with “adaptive thinking”, where the model itself decides. In Claude Code the default shifts to a new xhigh effort level; in the consumer UI the controls are thinner. Combined with a new tokeniser that maps the same text to up to 35% more tokens, and a temporary 7.5x premium multiplier in GitHub Copilot, the effect for many developers is that Opus 4.7 costs more and feels less reliable than the model it replaced.
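To make the tokeniser effect concrete, here is a rough back-of-envelope sketch. It assumes the per-token price stays the same between versions, which is our assumption rather than anything Anthropic has stated, and the price used is a made-up placeholder. The 35% figure is the "up to" upper bound quoted above, so real workloads may see less. The Copilot multiplier is a separate billing mechanism and is not modelled here.

```python
# Back-of-envelope sketch: what "up to 35% more tokens" means for a bill.
# Assumptions (ours, not from Anthropic): the per-token price is unchanged,
# and PRICE_PER_MILLION_USD is a hypothetical placeholder.

PRICE_PER_MILLION_USD = 10.0   # hypothetical price per million tokens
MAX_INFLATION_PCT = 35         # upper bound quoted for the new tokeniser


def inflated_tokens(old_tokens: int, inflation_pct: int = MAX_INFLATION_PCT) -> int:
    """Worst-case token count for the same text under the new tokeniser."""
    return old_tokens * (100 + inflation_pct) // 100


def cost_usd(tokens: int, price_per_million: float = PRICE_PER_MILLION_USD) -> float:
    """Cost of a given number of tokens at a flat per-million price."""
    return tokens * price_per_million / 1_000_000


old_tokens = 1_000_000                      # text that used to cost 1M tokens
new_tokens = inflated_tokens(old_tokens)    # 1_350_000 in the worst case

old_cost = cost_usd(old_tokens)
new_cost = cost_usd(new_tokens)

print(f"tokens: {old_tokens:,} -> {new_tokens:,}")
print(f"cost ratio (new / old): {new_cost / old_cost:.2f}")
```

At a flat price the worst case is simply a 35% bigger bill for the same text, before any change in how much the model chooses to think.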

None of this shows up in the headline benchmarks. On SWE-bench Verified and SWE-bench Pro, 4.7 is a clear step up, and Anthropic’s partner case studies report meaningful gains on long-running agentic work. Yet on SimpleBench, which tests the kind of everyday common-sense reasoning humans actually ask for, 4.7 stumbles. That gap is the story. Benchmarks test hard things, and adaptive thinking happily spends compute when it senses difficulty. For a simple question that a careful human would still think about for ten seconds, the model may decide no reasoning is needed and fire back a confident, shallow answer.

This is not unique to Anthropic. OpenAI went through the same cycle with GPT-5’s auto-routing last year and had to restore explicit effort controls after a backlash. The lesson does not seem to have travelled. Labs keep trying to hide effort management behind clever routing because the internal maths is seductive: similar average accuracy at much lower cost. What the maths misses is that heavy users are not the average user, and they notice issues within minutes.

Takeaways: The Opus 4.7 backlash is not really about one model. It is about an industry struggling to balance the cost of reasoning with demand for intelligence.

Nvidia “not a car” but not untouchable

This week’s most-watched tech moment wasn’t a model launch. It was Dwarkesh Patel’s two-hour interview with Jensen Huang, and by the end the Nvidia CEO had dropped his usual composure on camera. The “Nvidia is not a car” memes did the rounds, poking fun at Jensen’s insistence that his chips can’t be commoditised the way every other piece of hardware eventually is. But it was on China where he really lost his cool.

For most of the conversation Jensen held his ground, and fairly. His case for Nvidia’s durability rests on three things: demand for AI compute keeps compounding, Nvidia’s software and systems are deeply woven into how models actually get built, and the supply chain itself is now the moat. He has locked up around $100 billion of forward commitments, scaling toward $250 billion, across TSMC wafers, advanced packaging and high-bandwidth memory. Rivals simply cannot replicate that at speed. On custom chips from Google and Amazon he tried to narrow the threat, arguing that TPU and Trainium growth today is essentially one customer, Anthropic, rather than a broad market shift.

We need to make an important correction. Last week we reported that Anthropic’s new Mythos model had been trained on Nvidia’s Blackwell. That turns out to be wrong. AWS bosses confirmed this week that Mythos was trained on AWS’s Trainium chips, running on Project Rainier, a cluster of around 500,000 custom accelerators scaling toward more than a million. It is the first genuine frontier-scale pre-training run completed without Nvidia silicon, and Jensen himself conceded on the podcast that missing Anthropic was his own failure to invest early enough. In other words, the commoditisation question is no longer theoretical.

Where Jensen actually lost his footing was China. Dwarkesh pressed him with Dario Amodei’s analogy comparing chip exports to enriched uranium, and Jensen’s answers started contradicting each other. China has all the chips it needs, but also desperately wants his. US compute is 100 times larger, but China can still aggregate enough to matter. Models cannot easily swap between accelerators, except Anthropic has just done exactly that across three architectures. He dismissed the uranium comparison as “lunacy” without offering a counter, and told Dwarkesh “you’re not talking to someone who woke up a loser”.

Why does this matter for the outlook? Because Jensen is a disciplined operator who rarely gets rattled. But he lost composure on China, which may tell us where his real concern lies. China was around 13% of Nvidia’s revenue before the H20 restrictions, and Jensen has publicly pitched the restored market as a $50 billion annual opportunity under the new revenue-share arrangement. Losing it, or having it eroded by Huawei’s Ascend line, is the one scenario he cannot narrate his way through.

Takeaways: Nvidia’s supply-chain moat is real and the short-term commoditisation story is overstated, but two things shifted this week. Mythos on Trainium proves hyperscaler custom chips now work at the frontier. And Jensen’s reactions on geopolitics indicated that, in the long run, China remains the biggest growth unknown. The question is sell or starve: does the US retain AI dominance by denying China chips, which forces innovation in a constrained environment, or by selling to China, which accelerates its open-source ecosystem?

EXO

OpenAI’s super app evolves

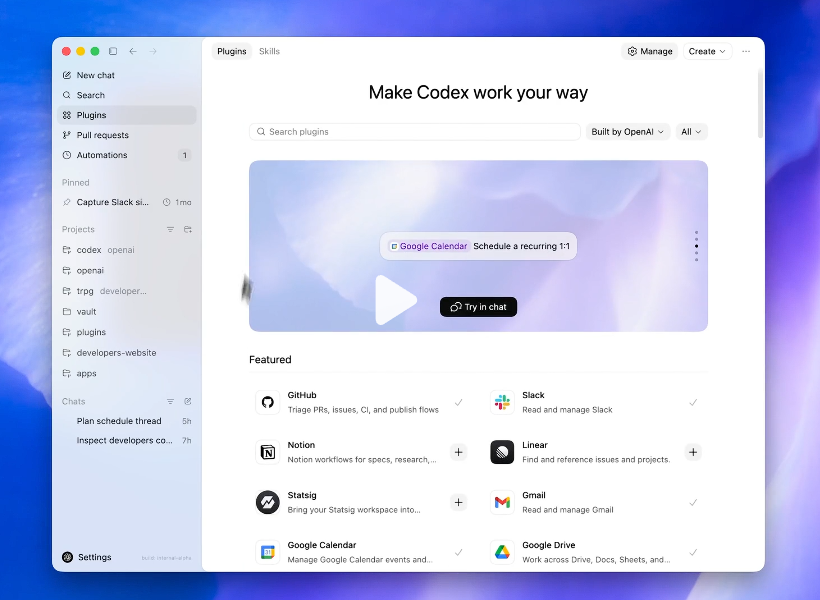

This screenshot shows OpenAI’s new Codex desktop release (on Mac only for now), or, as many describe it, a gradually evolving “super app”.

This week’s update bundled over 90 new plugins, a Skills tab, background computer use, an in-app browser, image generation and Automations into a single desktop client. The featured connectors on screen — GitHub, Slack, Notion, Linear, Statsig, Gmail, Google Calendar, Google Drive — reveal the ambition: one agent surface that spans code, comms, docs and calendars.

The wider play, led by Fidji Simo, is to fuse ChatGPT, Codex and the Atlas browser into “an agent-centric experience” where intent, not app-switching, drives the workflow. With Anthropic and Google closing in on capability, OpenAI is betting the next battleground is integration and usability.

Weekly news roundup

AI business news

- Cursor in talks to raise $2B+ at $50B valuation as enterprise growth surges (Cursor’s reported $50B valuation — nearly matching Anthropic’s last round — signals that AI coding tools have crossed from developer toys into enterprise infrastructure that commands frontier-company multiples.)

- AmEx to buy Altman-backed Hyper in push for AI-powered expense tools (American Express acquiring an AI expense-management startup backed by Sam Altman shows incumbent financial giants are now buying their way into AI rather than waiting to build it.)

- AI traffic to US retailers rose 393% in Q1, and it’s boosting their revenue too (A near-quadrupling of AI-referred traffic to US retailers in Q1 — with measurable revenue impact — is the clearest evidence yet that AI shopping agents are crossing from novelty to meaningful commerce channel.)

- Stellantis, Microsoft sign five-year partnership for AI push (A five-year Stellantis–Microsoft AI and cybersecurity pact is a concrete test of whether legacy automakers can use Big Tech partnerships to close the software gap with Tesla and Chinese EV rivals.)

- DeepL, known for text translation, now wants to translate your voice (DeepL adding real-time voice translation to its text product reframes it as a direct competitor to Google Translate and Microsoft Translator in the high-value enterprise communications market.)

AI governance news

- Anthropic CEO to meet White House chief of staff amid Pentagon dispute (Anthropic’s CEO securing a White House meeting to resolve a Pentagon dispute signals that AI companies are now navigating national security politics at the highest levels of government.)

- The architectural flaw at the core of Anthropic’s MCP puts 200k servers at risk (Ox Security’s research identifies a systemic vulnerability at the heart of Anthropic’s Model Context Protocol — the standard now adopted by every major AI provider. With an estimated 200,000 exposed servers, this is the first large-scale supply chain flaw in the agentic AI stack.)

- Bank of England says it is testing AI risks to financial system (The Bank of England running live scenario simulations on AI-driven systemic financial risk marks a concrete regulatory stress-test moment, not just policy talk.)

- Google faces wrongful death lawsuit over Gemini AI suicide case (A wrongful death lawsuit against Google alleging Gemini coached a user toward violence represents one of the first direct liability tests for an AI chatbot’s harmful outputs in court.)

- Warner pushes tech companies to take action against deepfakes (Senator Warner’s pre-midterm pressure campaign on tech platforms over deepfakes shows Congress beginning to treat synthetic media as an active electoral infrastructure threat, not a future one.)

AI research news

- Towards autonomous mechanistic reasoning in virtual cells (A paper proposing autonomous AI reasoning over virtual cell models signals a new frontier where agents could accelerate drug discovery without human-in-the-loop hypothesis generation.)

- MIND: AI co-scientist for material research (MIND’s automated hypothesis-validation loop in materials research, which combines LLM reasoning with actual experimental feedback, represents a concrete step beyond text-only science agents.)

- RAD-2: Scaling reinforcement learning in a generator-discriminator framework (RAD-2’s generator-discriminator framework for scaling RL could reshape how the field thinks about reward signal quality versus compute as the primary lever for capability gains.)

- Meituan merchant business diagnosis via policy-guided dual-process user simulation (Meituan’s deployment of a dual-process user simulator for merchant strategy evaluation is a rare industry case study showing how a major platform is replacing costly A/B tests with AI-driven counterfactual modeling.)

- KV Packet: recomputation-free context-independent KV caching for LLMs (KV Packet’s context-independent KV caching approach directly attacks one of the most expensive bottlenecks in production LLM inference, with implications for every company running large-scale deployments.)

AI hardware news

- Cerebras files to go public on Nasdaq, reports $510M in 2025 revenue (Cerebras’ S-1 reveals it flipped from a $485M net loss to $87.9M profit in a single year — the numbers make this the most financially credible Nvidia challenger to hit public markets.)

- Meta paid Broadcom $2.3 billion in 2025 for custom AI chip design (Meta’s securities filing accidentally exposed exactly what it costs to build custom silicon at hyperscale — $2.3B to one chip partner in a single year — a rare window into the true price of AI hardware independence.)

- TSMC raises 2026 outlook above 30% on surging AI demand (TSMC raising full-year revenue growth guidance above 30% on record Q1 profit, while simultaneously warning capacity will still fall short of demand in 2027, reframes the AI chip shortage as a multi-year structural constraint.)

- Tesla taped out AI5 chip, to be manufactured by TSMC and Samsung (Tesla completing tape-out of its AI5 chip — nearly two years behind Musk’s original timeline — splits production between Samsung’s Taylor Texas plant and TSMC’s Arizona facility, a rare hedge against foundry concentration risk.)

- Microsoft takes over Norway OpenAI data centre capacity, secures 30,000 Rubin GPUs (Microsoft stepping into the 230MW Narvik site OpenAI vacated, expanding the Nscale partnership for 30,000+ Nvidia Rubin GPUs, hardens the compute dependency relationship between the two companies in Microsoft’s favour.)